
Integration of External AI Workloads in AI-RAN: Implementation of Dynamic Resource Control with the AITRAS Orchestrator

#AI-RAN #AITRAS

Mar 04, 2026

SoftBank Corp.

The rapid rise of generative AI and inference-based AI has driven an unprecedented demand for compute resources. Telecommunications infrastructure is no exception; initiatives such as AI-assisted RAN (Radio Access Network) control, advanced network operations, and real-time edge inference are rapidly advancing.

In this technical blog, we will discuss a mechanism that enables the integrated execution of external AI workloads on an AI-RAN platform through an extension of the AITRAS Orchestrator compliant with the AI-RAN Alliance reference architecture. From a technical perspective, we provide a detailed discussion of the control loops and orchestration design required to incorporate external AI demand while maintaining stable internal AI processing and RAN control.

1. Background: AI-RAN and the Expansion of External AI Demand

AI-RAN is not merely a combination of RAN and AI; it represents a broader architectural concept where communication functions and AI workloads coexist and cooperate on the same infrastructure.

In this blog, we focus specifically on the "AI and RAN" approach, where both domains share the same compute resources and dynamically adjust based on their respective characteristics and priorities. Adopting this premise enables AI processing to run on the same platform while maintaining communication quality. However, because both domains rely on the same compute resources, managing fluctuations in demand and differing priorities becomes a critical design challenge. Furthermore, as AI processing platforms have become increasingly general-purpose in recent years, there is growing momentum toward more flexible use of telecommunications operators’ edge and data center infrastructure.

2. Challenge: Advancing Internal AI Control and the Limits of Resource Utilization Efficiency

As previously discussed, under the "AI and RAN" premise, communication functions and AI workloads share the same compute resources. To date, the AITRAS Orchestrator has managed priority control and resource allocation to ensure stable AI processing without compromising communication quality.

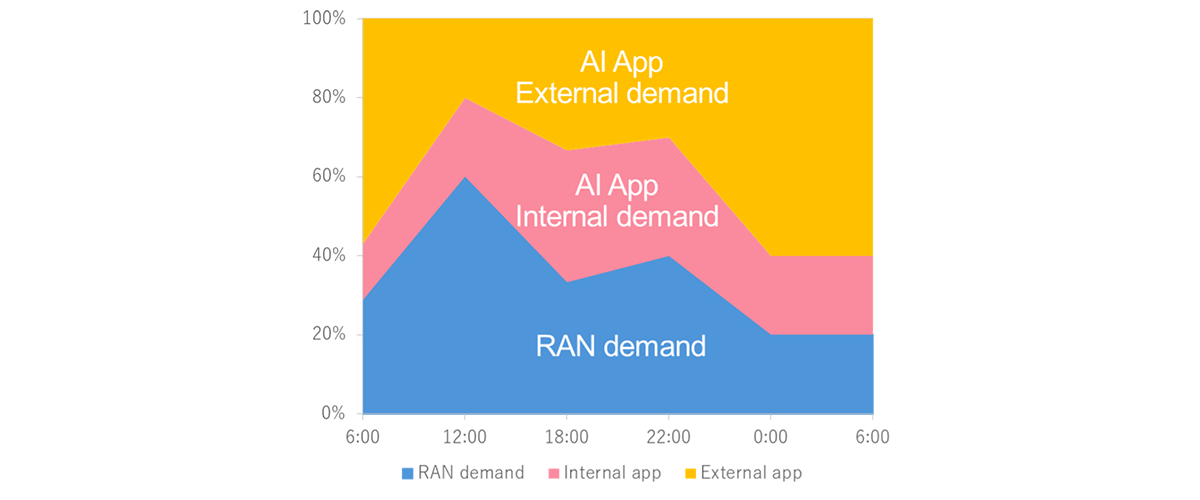

However, in an architecture where RAN and AI coexist on the same infrastructure, their demand tends to peak at the same times. This is especially pronounced when demand originates within a single country: during peak traffic periods, both communication functions and AI processing consume significant resources, leading to congestion. Conversely, during off-peak hours such as nighttime, these resources tend to be underutilized.

Even with a successful "AI and RAN" integration, relying solely on internal workloads limits the ability to balance these temporal shifts in demand. To address this, it is essential to incorporate external AI workloads during periods of surplus capacity. This improves overall compute resource utilization and leads to more efficient infrastructure operation.
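As a rough illustration of this temporal imbalance, the sketch below computes the hourly GPU surplus from a 24-hour demand profile and lists the hours in which enough capacity is left over to host external AI work. The cluster size, the demand figures, and the threshold are all invented for illustration; they are not SoftBank measurements.

```python
# Hypothetical example: all figures are illustrative, not measured data.
TOTAL_GPUS = 16  # assumed cluster size


def hourly_surplus(ran_demand, internal_ai_demand, total=TOTAL_GPUS):
    """GPUs left over in each hour after RAN and internal AI demand."""
    return [max(0, total - r - a) for r, a in zip(ran_demand, internal_ai_demand)]


def offpeak_hours(surplus, threshold):
    """Hours whose surplus meets the threshold: candidates for external AI."""
    return [hour for hour, s in enumerate(surplus) if s >= threshold]


# Assumed daily shape: low overnight, high during the day and evening.
ran = [2, 2, 2, 2, 2, 3, 4, 6, 8, 8, 8, 8, 8, 8, 8, 8, 8, 9, 10, 10, 9, 7, 5, 3]
internal_ai = [1, 1, 1, 1, 1, 1, 2, 3, 4, 4, 4, 4, 4, 4, 4, 4, 4, 4, 5, 5, 4, 3, 2, 1]

surplus = hourly_surplus(ran, internal_ai)
print(offpeak_hours(surplus, threshold=10))  # → [0, 1, 2, 3, 4, 5, 6, 23]
```

In this toy profile, the usable window is the late night and early morning, which is exactly the period the post targets for external workloads.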

However, achieving this requires an orchestration mechanism capable of holistically evaluating and dynamically controlling both internal and external AI workloads with minimal impact on RAN control. AITRAS is designed to serve as this comprehensive control platform, overseeing and optimizing the entire AI-RAN ecosystem.

Figure 1. Time-Based Changes in Compute Resource Utilization in AI-RAN

3. Overview of the AITRAS Orchestrator

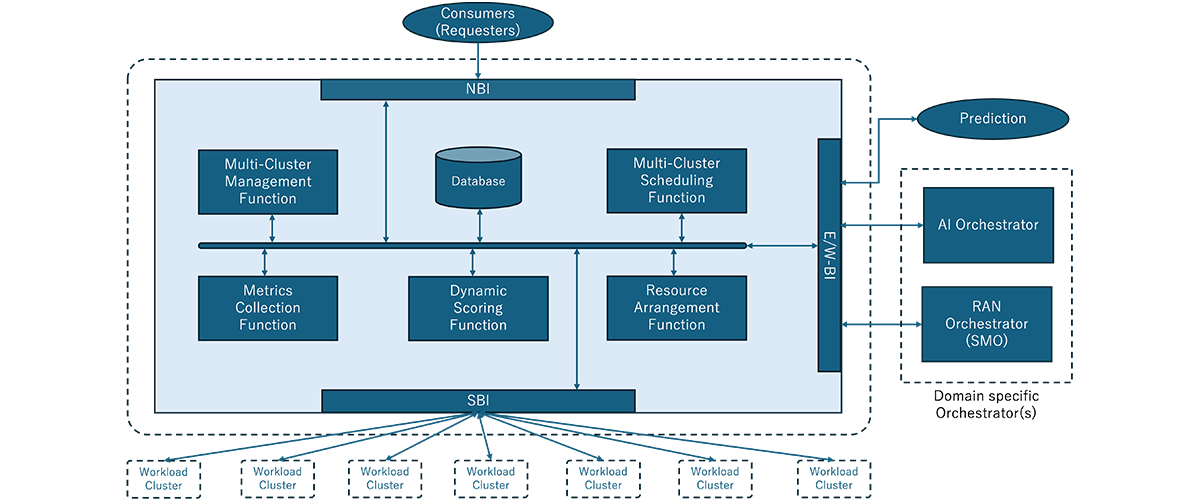

The AITRAS Orchestrator serves as the core control mechanism for the integrated management of the entire AI-RAN ecosystem, including AI for RAN, AI on RAN, and AI and RAN. It provides real-time, cross-domain visibility into compute resource demands for both RAN control and AI processing, ensuring optimal resource allocation based on current operational conditions.

By analyzing monitoring data and predefined policies, the orchestrator allocates resources while balancing the priorities and constraints of each workload. The integration of external AI workloads helps mitigate the temporal imbalances in resource utilization that previously led to inefficiencies.

During peak hours, the system prioritizes RAN and internal AI workloads to ensure communication quality and system stability. Conversely, during off-peak periods such as nighttime, external AI workloads are onboarded to leverage surplus capacity. This strategy ensures more efficient utilization of compute resources on the shared infrastructure.
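The peak/off-peak behavior can be approximated by a strict priority order: RAN first, then internal AI, with external AI receiving only what remains. The minimal sketch below assumes exactly that ordering and whole-GPU granularity; the actual AITRAS policies are likely richer (reservations, preemption, per-workload constraints) and are not described in this post.

```python
def allocate(capacity, demands):
    """Grant GPUs in strict priority order; later entries get only the remainder.

    `demands` is a list of (workload_class, requested_gpus), highest priority
    first. Class names and numbers below are illustrative assumptions.
    """
    grants = {}
    remaining = capacity
    for workload, requested in demands:
        granted = min(requested, remaining)
        grants[workload] = granted
        remaining -= granted
    return grants


# Daytime peak: RAN and internal AI take most of the cluster.
peak = allocate(16, [("ran", 10), ("internal_ai", 5), ("external_ai", 8)])
# Nighttime off-peak: the same external request is fully served.
offpeak = allocate(16, [("ran", 2), ("internal_ai", 1), ("external_ai", 8)])
print(peak)     # → {'ran': 10, 'internal_ai': 5, 'external_ai': 1}
print(offpeak)  # → {'ran': 2, 'internal_ai': 1, 'external_ai': 8}
```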

As a result, the system can dynamically adapt to time-based fluctuations in demand, executing AI processing while minimizing any impact on RAN control.

4. Architecture for Integration with External AI Platforms

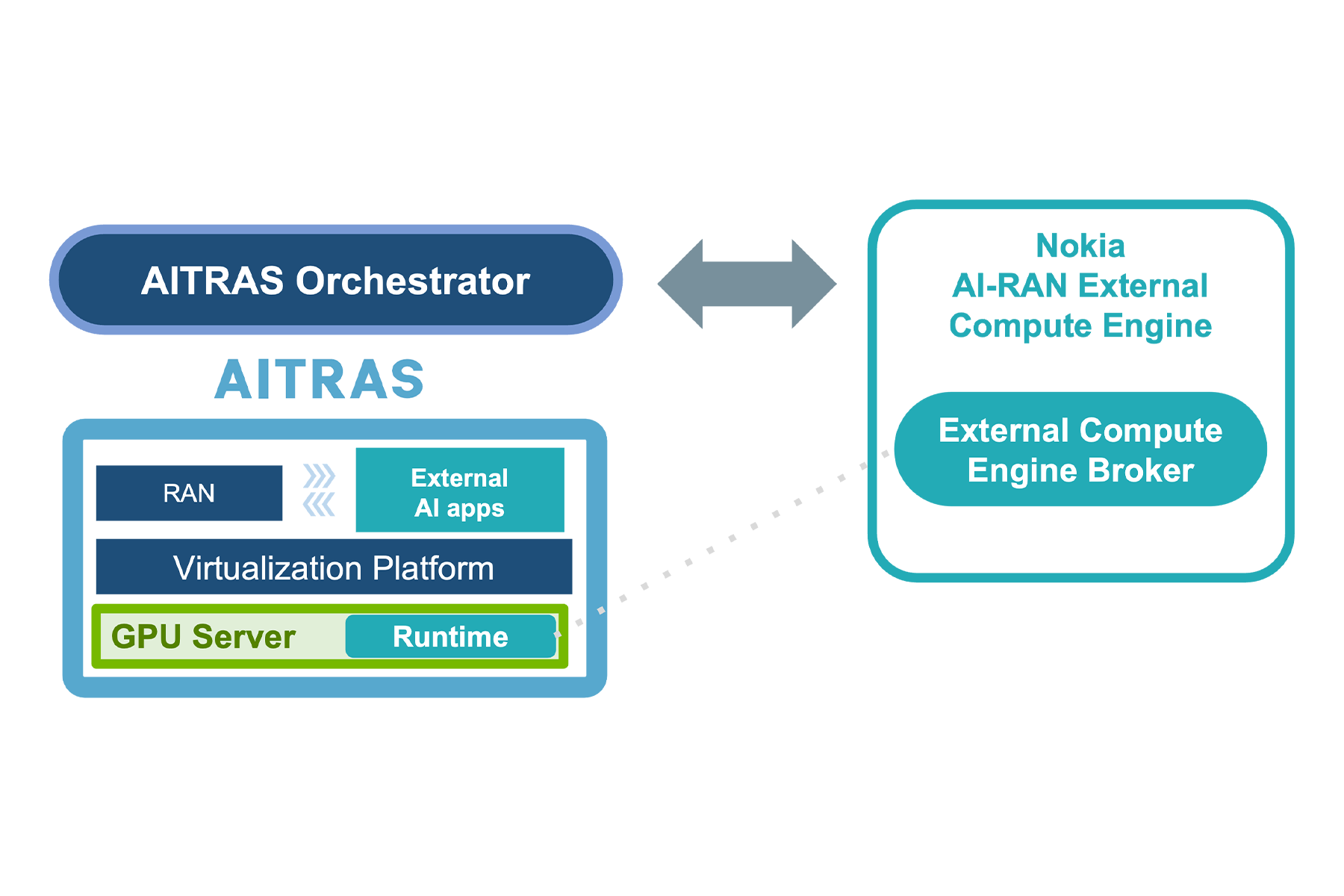

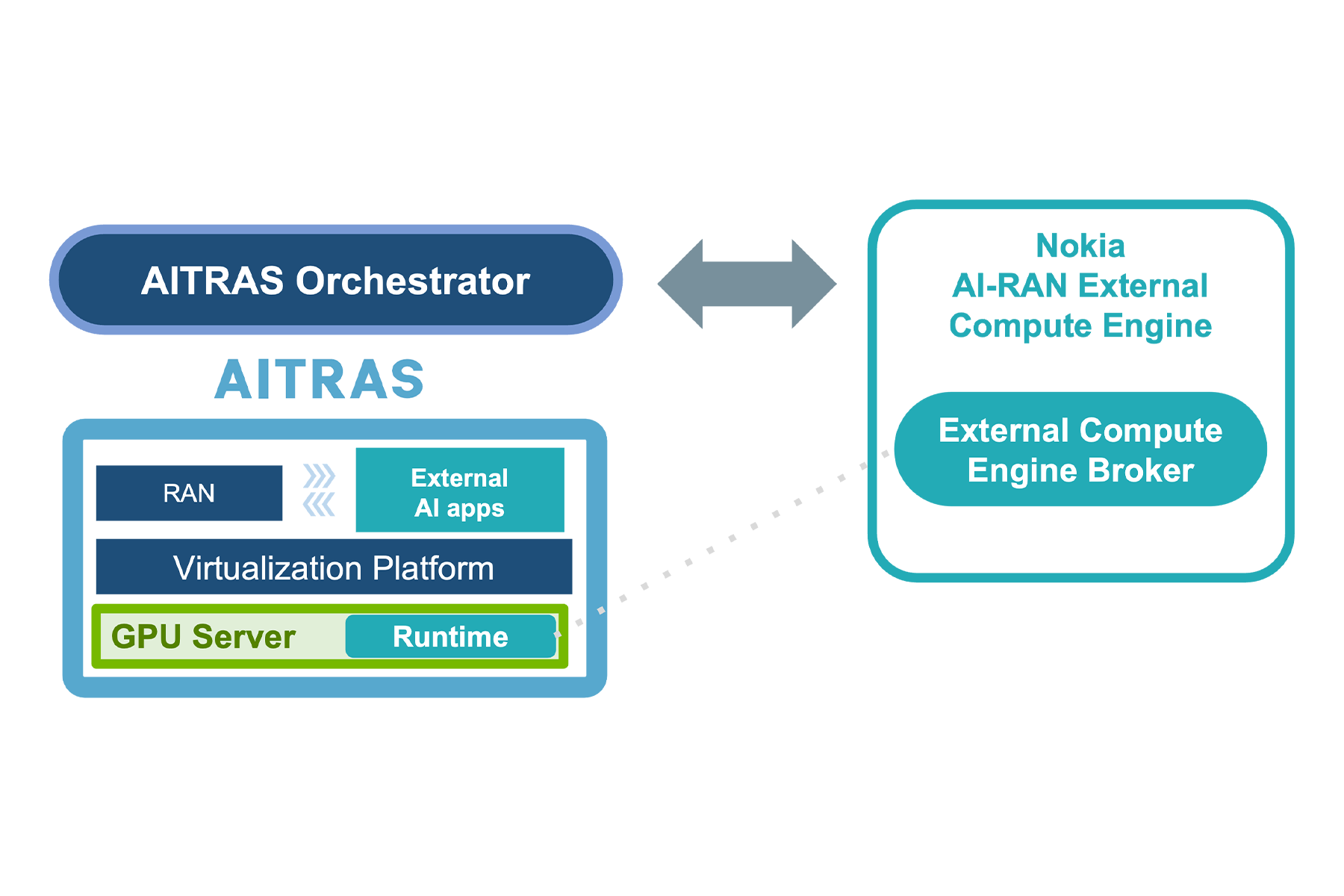

In this configuration, the external AI platform acts as a broker-based AI orchestration platform that mediates between multiple AI workloads and compute resource providers. For example, at MWC26 we showcased interoperation with Nokia Bell Labs’ AI platform, the Nokia AI-RAN External Compute Engine. Within the AI-RAN Alliance reference architecture, this "AI Orchestrator" is responsible for workload intake, defining execution requirements, and selecting and allocating execution environments.

The edge and data center clusters managed by the AITRAS Orchestrator provide compute resources to this external AI platform. The external platform perceives the AITRAS-controlled clusters as a single, abstracted resource pool, utilizing them based on the required capacity and specific constraints provided by the orchestrator.
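One way to picture the "single, abstracted resource pool" is to collapse per-cluster capacity into totals per hardware type before anything is shown to the external platform. In this sketch the class, its field names, and the GPU type labels are hypothetical; it only illustrates hiding cluster placement behind an aggregate offer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClusterCapacity:
    name: str        # edge or data center cluster managed by the orchestrator
    free_gpus: int   # GPUs the orchestrator has decided it can lend now
    gpu_type: str    # hardware class, exposed to the external side as a constraint


def pooled_offer(clusters, allowed_types):
    """Aggregate lendable GPUs per hardware type, hiding which cluster holds them."""
    offer = {}
    for c in clusters:
        if c.gpu_type in allowed_types and c.free_gpus > 0:
            offer[c.gpu_type] = offer.get(c.gpu_type, 0) + c.free_gpus
    return offer


clusters = [
    ClusterCapacity("edge-a", 4, "type-a"),
    ClusterCapacity("edge-b", 2, "type-a"),
    ClusterCapacity("dc-1", 12, "type-b"),
    ClusterCapacity("dc-2", 0, "type-b"),
]
print(pooled_offer(clusters, {"type-a", "type-b"}))  # → {'type-a': 6, 'type-b': 12}
```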

A key feature of this integration is the clear separation of responsibilities. The AITRAS Orchestrator determines resource availability by prioritizing RAN control stability and internal AI requirements. Information regarding resource requests and execution status is exchanged between the AITRAS Orchestrator and the external AI platform, ensuring that control remains decoupled from the complexities of external tenant management.
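This separation of responsibilities can be expressed as a narrow interface: the orchestrator sees request IDs, sizes, and execution states, but nothing about the external tenant. The message types and states below are hypothetical and are not the actual AITRAS or Nokia interface.

```python
from dataclasses import dataclass
from enum import Enum


class JobState(Enum):
    PENDING = "pending"
    RUNNING = "running"
    COMPLETED = "completed"


@dataclass(frozen=True)
class ResourceRequest:
    request_id: str
    gpus: int
    max_duration_s: int  # how long the external platform wants the capacity


@dataclass(frozen=True)
class ExecutionStatus:
    request_id: str
    state: JobState


def gpus_lent(requests, statuses):
    """Count GPUs currently lent out, using only request IDs, sizes, and states.

    No tenant, model, or user information is needed on the orchestrator side.
    Requests without a reported status are not counted in this sketch.
    """
    active = {s.request_id for s in statuses
              if s.state in (JobState.PENDING, JobState.RUNNING)}
    return sum(r.gpus for r in requests if r.request_id in active)


requests = [ResourceRequest("r1", 4, 3600), ResourceRequest("r2", 2, 600)]
statuses = [ExecutionStatus("r1", JobState.RUNNING),
            ExecutionStatus("r2", JobState.COMPLETED)]
print(gpus_lent(requests, statuses))  # → 4
```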

This broker-based integration model enables the provision of compute resources without being directly coupled to individual AI applications. Consequently, the orchestrator can focus on its primary mission—balancing RAN control and internal AI priorities—while efficiently leveraging surplus capacity for external AI workloads during off-peak periods.

Figure 2. System Architecture of AI-RAN Orchestrator

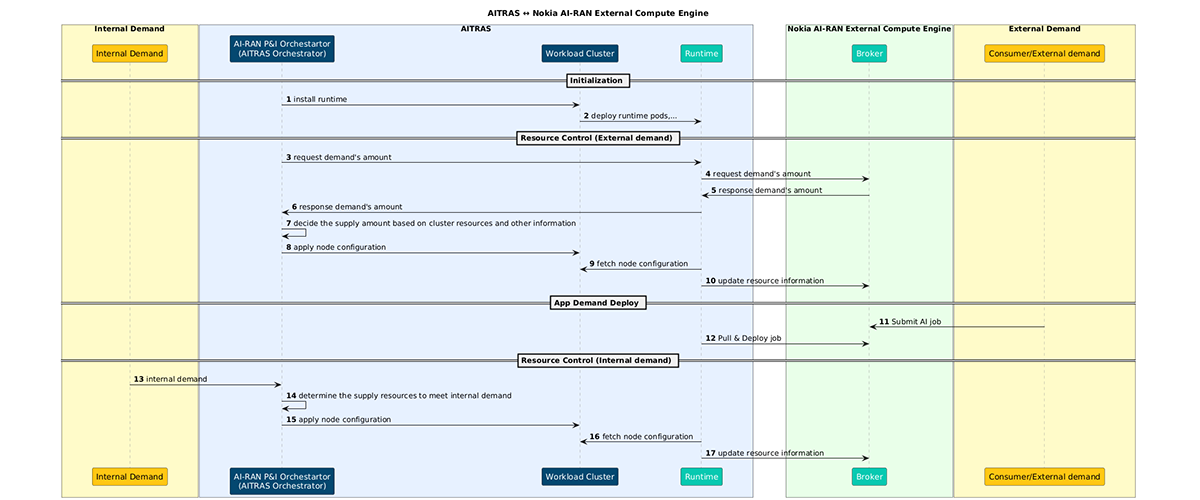

5. Operational Sequence: Execution of External AI Workloads

The integration of external AI workloads is designed as a continuous control loop based on constant monitoring and periodic scheduling within the "AI and RAN" environment. This process follows a clear path: Visibility → Evaluation → Decision-making → Configuration.

Figure 3. Interaction Sequence between the AITRAS Orchestrator and the External AI Platform
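The Visibility → Evaluation → Decision-making → Configuration path maps naturally onto a loop of four pluggable stages. This skeleton is a sketch under the assumption that each stage can be modeled as a plain function; the stage implementations, the period, and the function names are hypothetical.

```python
import time


def control_cycle(observe, evaluate, decide, apply):
    """One pass of Visibility -> Evaluation -> Decision-making -> Configuration."""
    snapshot = observe()              # Visibility: collect internal and external demand
    assessment = evaluate(snapshot)   # Evaluation: what could be lent right now
    decision = decide(assessment)     # Decision-making: how much to actually offer
    apply(decision)                   # Configuration: e.g. commit desired state to Git
    return decision


def run(cycles, period_s, **stages):
    """Repeat the cycle on a fixed period; each cycle sees fresh monitoring data."""
    decisions = []
    for _ in range(cycles):
        decisions.append(control_cycle(**stages))
        time.sleep(period_s)
    return decisions


# Toy stages: keep 2 GPUs in reserve and offer the rest (assumed policy).
applied = []
decisions = run(
    cycles=1,
    period_s=0,
    observe=lambda: {"free_gpus": 6},
    evaluate=lambda snap: snap["free_gpus"] - 2,
    decide=lambda spare: max(0, spare),
    apply=applied.append,
)
print(decisions, applied)  # → [4] [4]
```

Passing the stages in as functions keeps the loop itself independent of any particular monitoring system or Git tooling.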

1. Demand Visibility (Continuous Monitoring)

The system continuously monitors the demand and priority of RAN control and internal AI workloads. Resource metrics such as GPU utilization and allocation status are tracked in real time, providing continuous visibility into surplus capacity. At the same time, demand from external AI workloads is collected, forming the data foundation for all orchestration decisions.
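A monitoring snapshot only supports safe decisions if it is fresh. The sketch below sums unallocated GPUs across nodes and ignores any node whose sample is older than a cutoff, so gaps in telemetry cannot inflate the apparent surplus. The `NodeMetrics` fields and the 30-second cutoff are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeMetrics:
    node: str
    gpus_total: int
    gpus_ran: int           # GPUs allocated to RAN processing
    gpus_internal_ai: int   # GPUs allocated to internal AI workloads
    collected_at: float     # UNIX timestamp of the sample


def fresh_surplus(samples, now, max_age_s=30.0):
    """Sum unallocated GPUs across nodes, skipping stale samples.

    A node with old telemetry contributes zero, so missing data can only
    make the reported surplus smaller, never larger.
    """
    total = 0
    for m in samples:
        if now - m.collected_at <= max_age_s:
            total += max(0, m.gpus_total - m.gpus_ran - m.gpus_internal_ai)
    return total


samples = [
    NodeMetrics("n1", 8, 4, 2, collected_at=995.0),
    NodeMetrics("n2", 8, 1, 1, collected_at=990.0),
    NodeMetrics("n3", 8, 0, 0, collected_at=900.0),  # 100 s old: ignored
]
print(fresh_surplus(samples, now=1000.0))  # → 8
```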

2. Periodic Scheduling and Value Evaluation

Periodic scheduling is executed based on the updated internal and external demand data. At this stage, the system evaluates whether compute resources can be allocated for external provisioning.

While the current criteria primarily focus on the availability of GPU resources, future iterations are envisioned to incorporate a holistic value assessment—balancing internal metrics, such as communication quality and operational efficiency, with the business value generated through external provisioning.
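The current criterion, GPU availability, could be implemented as a simple threshold check. This sketch assumes the orchestrator always holds back a fixed percentage of the cluster as headroom for sudden RAN or internal AI demand; `reserve_pct` and `min_offer` are hypothetical tuning knobs, not documented AITRAS parameters.

```python
import math


def gpu_availability_check(free_gpus, total_gpus, reserve_pct=20, min_offer=1):
    """Return (offer_possible, spare_gpus) using GPU availability alone.

    reserve_pct: percentage of the cluster always held back as headroom
                 (an integer, so the reserve is computed without float drift).
    min_offer:   smallest offer worth presenting to the external platform.
    """
    reserve = math.ceil(total_gpus * reserve_pct / 100)
    spare = max(0, free_gpus - reserve)
    return spare >= min_offer, spare


print(gpu_availability_check(free_gpus=10, total_gpus=16))  # → (True, 6)
print(gpu_availability_check(free_gpus=4, total_gpus=16))   # → (False, 0)
```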

3. Decision on External Provisioning

If the evaluation confirms that capacity is available, that capacity is offered to the external AI platform (AI Orchestrator). The volume of resources provided is determined dynamically based on the current demand profile.
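Sizing the offer "based on the current demand profile" could, for example, mean capping it at external demand and subtracting the GPUs that internal workloads are expected to need before the next cycle. The forecast input here is an assumption; the post does not say how, or whether, AITRAS predicts near-term demand.

```python
def offer_volume(spare_gpus, external_demand, forecast_internal_increase=0):
    """Size the external offer from the current demand profile.

    - Never offer more than the external platform is asking for.
    - Subtract GPUs that RAN and internal AI are forecast to need before the
      next scheduling cycle (the forecast source is an assumption).
    """
    headroom = max(0, spare_gpus - forecast_internal_increase)
    return min(external_demand, headroom)


print(offer_volume(6, external_demand=10))                                # → 6
print(offer_volume(6, external_demand=3))                                 # → 3
print(offer_volume(6, external_demand=10, forecast_internal_increase=4))  # → 2
```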

4. Execution (Cluster Configuration via GitOps)

The selected policy is reflected as a configuration update in a Git repository. Role definitions (e.g., RAN-prioritized, general-purpose, GPU-dedicated), taints, and scheduling policies are updated and applied to the cluster through a GitOps workflow.
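To show what a declarative role change might look like, the sketch below renders node role assignments into Node-shaped Kubernetes objects carrying a label and taints (`NoSchedule` is a standard Kubernetes taint effect). The key `aitras.example/role`, the role-to-taint mapping, and the choice to express roles as Node objects are hypothetical; the post does not specify the manifest format or the GitOps tooling. The rendered text would be committed to Git, not applied directly.

```python
import json

# Hypothetical mapping from the roles named in the post to taints.
# The key "aitras.example/role" is invented for this sketch.
ROLE_KEY = "aitras.example/role"
ROLE_TAINTS = {
    "ran-prioritized": [{"key": ROLE_KEY, "value": "ran", "effect": "NoSchedule"}],
    "gpu-dedicated": [{"key": ROLE_KEY, "value": "gpu", "effect": "NoSchedule"}],
    "general-purpose": [],  # no taint: any workload may be scheduled here
}


def node_desired_state(node_name, role):
    """Build the declarative fragment for one node (label + taints)."""
    return {
        "apiVersion": "v1",
        "kind": "Node",
        "metadata": {"name": node_name, "labels": {ROLE_KEY: role}},
        "spec": {"taints": [dict(t) for t in ROLE_TAINTS[role]]},
    }


def render_desired_state(assignments):
    """Serialize node -> role assignments deterministically for a Git commit.

    Sorting nodes and keys keeps the file stable, so a role change shows up
    as a minimal, reviewable diff.
    """
    docs = [node_desired_state(n, r) for n, r in sorted(assignments.items())]
    return json.dumps(docs, indent=2, sort_keys=True)


print(render_desired_state({"node-2": "gpu-dedicated", "node-1": "ran-prioritized"}))
```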

In this context, "execution" does not involve direct manipulation of nodes or workloads at runtime. Instead, the "desired state" is updated declaratively through configuration changes. This approach allows the external AI acceptance policy to be applied to the infrastructure with high safety and reproducibility, maintaining the Git repository as the single source of truth.
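The "desired state" approach means the cluster changes only by being reconciled toward what Git declares. This minimal sketch computes the plan a GitOps agent would derive from the difference between desired and observed node roles; it performs no cluster operations, and the data shapes are assumptions.

```python
def reconcile_plan(desired, actual):
    """List (node, current_role, desired_role) changes needed to match Git.

    `desired` comes from the repository, `actual` from the cluster. Nodes not
    mentioned in Git are left alone in this sketch.
    """
    plan = []
    for node in sorted(desired):
        current = actual.get(node)
        if current != desired[node]:
            plan.append((node, current, desired[node]))
    return plan


desired = {"n1": "ran-prioritized", "n2": "gpu-dedicated"}
actual = {"n1": "ran-prioritized", "n2": "general-purpose"}
print(reconcile_plan(desired, actual))  # → [('n2', 'general-purpose', 'gpu-dedicated')]

# After the agent applies the plan, the cluster matches Git and nothing is left to do.
actual.update({node: role for node, _, role in reconcile_plan(desired, actual)})
print(reconcile_plan(desired, actual))  # → []
```

Because the plan is derived purely from the diff, rerunning it after the change has been applied yields an empty plan, which is what makes repeated reconciliation safe.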

The four steps above function as a continuous control loop. Real-time updates from monitoring are cyclically integrated into periodic scheduling, evaluation, and GitOps-based policy reflection. This ensures that the balance between internal and external demand is reflected in the next scheduling cycle, where provision volumes and acceptance policies are reassessed.

By integrating demand visibility, evaluation, and execution into a unified control loop, AITRAS continuously adapts to demand fluctuations within the "AI and RAN" environment. This collaboration with external AI platforms transforms the AI-RAN infrastructure into a unified foundation, capable of handling both internal and external AI workloads in a truly integrated manner.

6. Future Outlook: Evolving into Infrastructure That Maximizes Value

In this article, we have discussed the technical background and control architecture for external AI integration within the AI-RAN environment, centered on the AITRAS Orchestrator. Real-time demand visibility and flexible resource control are essential elements for maximizing compute resource utilization.

Looking ahead, the role of orchestration will extend beyond simply deciding whether to accept external AI workloads. It will evolve to evaluate the "value" generated by both internal and external AI workloads, selecting the options that deliver the greatest overall impact. This involves a comprehensive assessment that weighs internal value—such as communication quality and operational efficiency—against the business value created through external provisioning.
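In its simplest form, a value-oriented decision could score each candidate allocation by weighing internal value against external business value, minus a penalty for risk to RAN quality. The linear score, the weights, and the assumption that all values share one unit are illustrative choices of this sketch; expressing communication quality in the same unit as business value is itself an open design problem.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    internal_value: float  # e.g. expected gain in communication quality or efficiency
    external_value: float  # e.g. expected business value of external provisioning
    risk_penalty: float    # e.g. expected cost of degrading RAN performance


def best_option(options, w_internal=1.0, w_external=1.0):
    """Return the option with the highest weighted net value (linear score)."""
    def score(o):
        return (w_internal * o.internal_value
                + w_external * o.external_value
                - o.risk_penalty)
    return max(options, key=score)


options = [
    Option("keep_all_internal", internal_value=5.0, external_value=0.0, risk_penalty=0.0),
    Option("offer_4_gpus", internal_value=3.0, external_value=4.0, risk_penalty=1.0),
    Option("offer_8_gpus", internal_value=1.0, external_value=8.0, risk_penalty=6.0),
]
print(best_option(options).name)  # → offer_4_gpus
```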

Through such value-oriented orchestration, AITRAS will evolve AI-RAN infrastructure from a mere execution platform into an "infrastructure that maximizes value." By dynamically optimizing the balance between internal utilization and external provisioning, we aim to maintain the essential stability of telecommunications infrastructure while fully unlocking its computational potential.