SoftBank Corp.’s Initiatives Toward the Social Implementation of Physical AI

#AI-RAN #AITRAS #PhysicalAI

Mar 23, 2026

SoftBank Corp.

The rapid evolution of generative AI has significantly enhanced the “thinking” capabilities of Large Language Models (LLMs). What would happen if this intelligence could autonomously act in the physical world? How would society change?

This article introduces “Physical AI,” an initiative led by the SoftBank Research Institute of Advanced Technology, along with examples of AI-RAN and AITRAS, which serve as its foundational infrastructure.

1. What is Physical AI?

Physical AI is a framework in which AI understands and interprets information obtained from sensors and cameras, and reflects the results in the physical actions of robots and other devices. While generative AI has primarily handled intelligent processing in digital spaces, Physical AI extends that intelligence into actions in the real world.

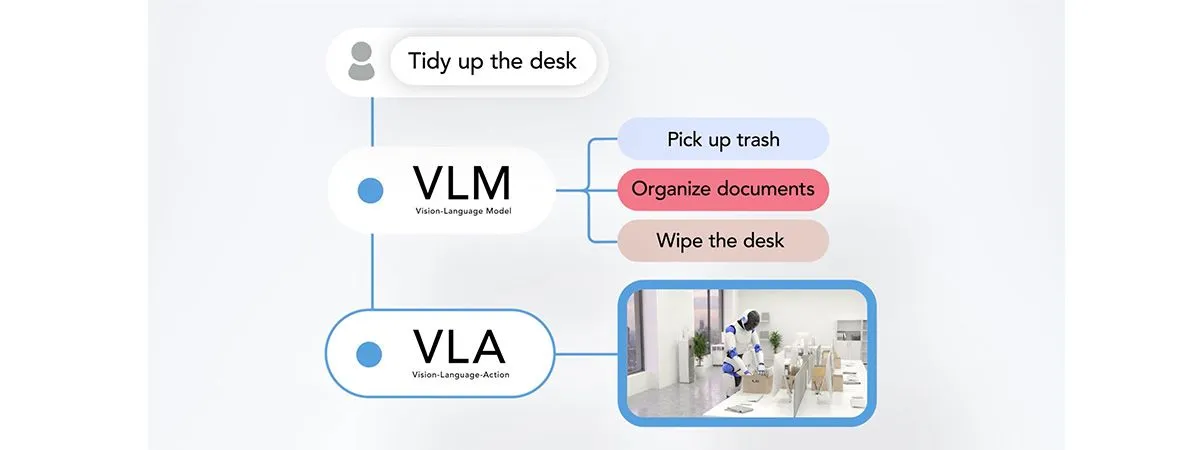

At the core of this concept are two foundational AI models: the Vision-Language Model (VLM) and the Vision-Language-Action (VLA) model. The VLM interprets visual inputs such as camera images and sensor data, determines what actions should be taken, and decomposes high-level, abstract instructions into multiple sub-tasks. The VLA model receives these sub-tasks and converts them into concrete robotic actions. Using visual feedback, it continuously optimizes motion trajectories and object manipulation (such as grasping) in real time and executes them as smooth, continuous actions.

Through this coordination, Physical AI can respond even to ambiguous instructions such as “tidy up the desk,” enabling flexible behavior in dynamic environments.
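The division of labor between the two models can be sketched in a few lines of Python. This is only an illustration of the data flow described above, not SoftBank's implementation: `plan_subtasks` and `execute_subtask` are hypothetical stand-ins for a VLM and a VLA model, and the hard-coded rules replace what would in practice be model inference on camera and sensor input.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SubTask:
    verb: str    # what to do, e.g. "put_away"
    target: str  # which object the sub-task applies to


@dataclass
class Action:
    name: str    # low-level motion primitive, e.g. "grasp"
    target: str


def plan_subtasks(instruction: str, visible_objects: List[str]) -> List[SubTask]:
    """Hypothetical stand-in for the VLM: abstract instruction + scene -> sub-tasks."""
    if instruction == "tidy up the desk":
        # One sub-task per object the (assumed) vision input reports on the desk.
        return [SubTask("put_away", obj) for obj in visible_objects]
    return []  # unknown instruction: nothing to plan in this toy version


def execute_subtask(subtask: SubTask) -> List[Action]:
    """Hypothetical stand-in for the VLA model: sub-task -> concrete robot actions."""
    return [
        Action("reach", subtask.target),
        Action("grasp", subtask.target),
        Action("place", subtask.target),
    ]


def run(instruction: str, visible_objects: List[str]) -> List[Action]:
    """Chain the two stages: plan sub-tasks, then expand each into actions."""
    actions: List[Action] = []
    for subtask in plan_subtasks(instruction, visible_objects):
        actions.extend(execute_subtask(subtask))
    return actions


if __name__ == "__main__":
    for action in run("tidy up the desk", ["cup", "pen"]):
        print(action.name, action.target)
```

A real VLA model would also re-observe the scene between actions and adjust its trajectory; the fixed reach, grasp, place sequence here omits that feedback loop for brevity.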

2. Why AI-RAN / MEC is Necessary

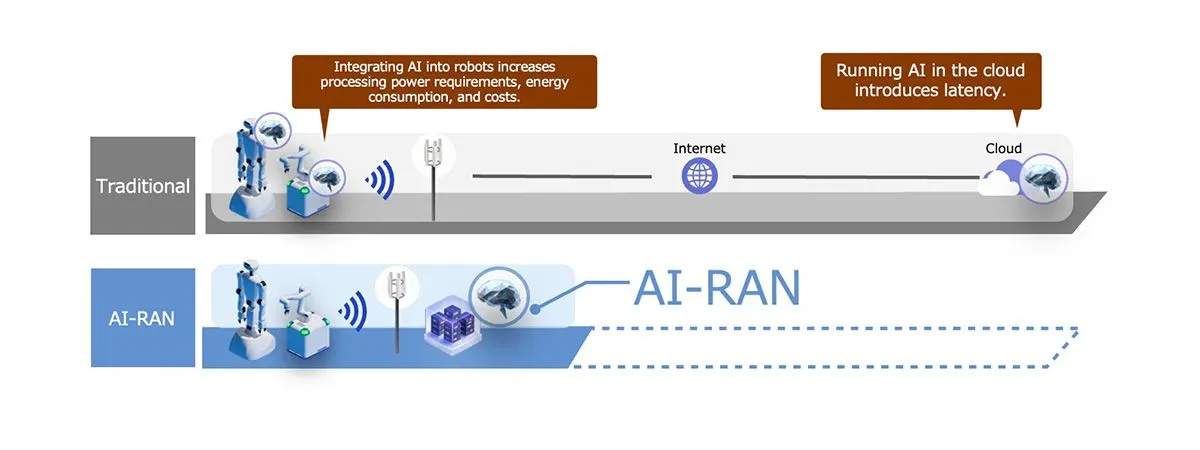

Physical AI requires advanced decision-making, which inevitably leads to increasingly large AI models. As a result, a single robot cannot run all of the computation on its own. Processing on the robot itself keeps latency low, but it is constrained by limited computational resources, power consumption, and cost. Cloud-based processing, on the other hand, provides high computational performance but introduces communication latency and variability.

To address these challenges, SoftBank is developing an MEC (Multi-access Edge Computing) environment based on AI-RAN, which integrates AI with the RAN (Radio Access Network). Vast amounts of data from sensors and cameras are aggregated, and VLM processing is offloaded to MEC servers located near base stations, so robots can transmit video and audio data with ultra-low latency and receive the analysis results immediately. Compared with cloud processing, latency is significantly lower and far more stable.

Furthermore, MEC enables access to large-scale computational resources that cannot be installed on individual robots. Even when accounting for communication time, this allows faster processing than performing inference locally on the robot. As a result, large-scale models can be executed with extremely short response times, enabling Physical AI that achieves both high intelligence and high speed.
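The trade-off can be made concrete with a simple latency budget: response time is roughly the network round trip plus the inference time at the chosen location. The sketch below compares the three placements discussed above. All figures are illustrative assumptions chosen to show the shape of the argument, not measurements from SoftBank or any real deployment, and the `Placement` class is a hypothetical helper.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Placement:
    name: str
    network_rtt_ms: float  # round trip between robot and compute (assumed value)
    inference_ms: float    # model inference time at that location (assumed value)

    def response_ms(self) -> float:
        """Total time from sending sensor data to receiving a result."""
        return self.network_rtt_ms + self.inference_ms


def fastest(placements: List[Placement]) -> Placement:
    """Return the placement with the lowest total response time."""
    return min(placements, key=lambda p: p.response_ms())


# Illustrative numbers only: a small on-robot accelerator is slow on a large
# model, the cloud is fast but far away, and MEC is fast and close.
PLACEMENTS = [
    Placement("on_robot", network_rtt_ms=0.0, inference_ms=400.0),
    Placement("mec", network_rtt_ms=10.0, inference_ms=60.0),
    Placement("cloud", network_rtt_ms=80.0, inference_ms=50.0),
]

if __name__ == "__main__":
    for p in PLACEMENTS:
        print(f"{p.name}: {p.response_ms():.0f} ms")
    print("fastest:", fastest(PLACEMENTS).name)
```

Under these assumed values MEC wins because the added round trip is small compared with the inference time it saves. With a smaller model or a slower link the comparison could come out differently, so the numbers should be replaced with measured ones before drawing conclusions.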

In addition, the use of MEC significantly improves system flexibility. The AI, the “brain” of the system, can be updated continuously without replacing robot hardware. This allows robots in the field to keep evolving with the latest Physical AI capabilities.

3. Collaboration with Yaskawa Electric: Toward Social Implementation of Physical AI

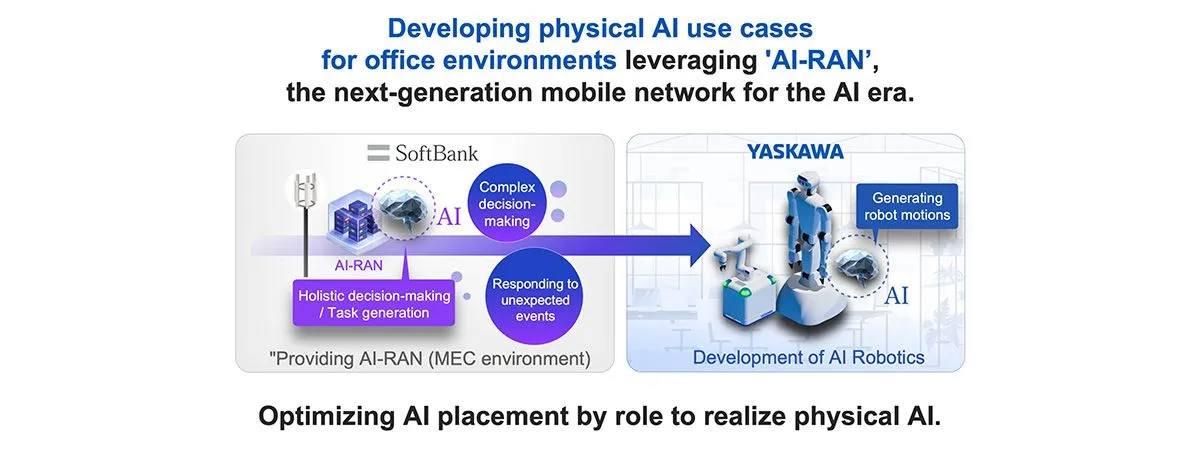

In December 2025, SoftBank announced a collaboration with Yaskawa Electric to advance the social implementation of Physical AI using AI-RAN. This initiative addresses the growing need for advanced automation in environments where people interact, driven by labor shortages and increasing operational complexity.

As the first phase, the two companies jointly developed a use case for office-oriented Physical AI robots that operate on MEC. Sensor data from the robots is integrated and analyzed together with external data from within the building, and optimal tasks are generated in real time. These tasks are then translated into specific actions by AI on the robot side, enabling a single robot to perform multiple roles.

By combining Yaskawa Electric’s precise control technologies with SoftBank’s AI-RAN and MEC infrastructure, the collaboration aims to create and implement new automation solutions that integrate AI and communications into robotics.

Related Press Release: SoftBank Corp. and Yaskawa Electric Corporation Begin Collaboration on Social Implementation of "Physical AI" Utilizing AI-RAN (Dec 1, 2025)

4. The Future Enabled by Physical AI

Physical AI extends AI intelligence into real-world actions, enabling advanced autonomous operation of robots and various devices. Its realization requires the integration of multiple advanced technologies, including enhanced AI models, optimization in edge and MEC environments, coordination across distributed locations, and the integration of communications and computing through AI-RAN.

SoftBank is promoting research, development, and demonstration aimed at the social implementation of Physical AI through the construction of AI-native social infrastructure.

The foundation for a future where Physical AI is commonplace is already being built.