
Achieving stable Physical AI through dynamic AI processing offloading and communication network with differentiated connectivity

#AI-RAN #PhysicalAI

Mar 27, 2026

SoftBank Corp.

In this article, we introduce an architecture designed to achieve low-latency, highly reliable control by tightly integrating robots, networks, and computing resources. The work is part of a joint verification initiative launched by SoftBank Corp. (“SoftBank”) in collaboration with Ericsson (NASDAQ: ERIC). The architecture combines real-time processing technology built on SoftBank’s AI-RAN MEC (Multi-access Edge Computing) platform, which is under development, with Ericsson’s advanced 5G (fifth-generation mobile communication system) network capabilities.

Related Press Release: SoftBank Corp. and Ericsson Successfully Demonstrate Low-Latency, High-Reliability Network-enabled Physical AI With AI-RAN (Feb 27, 2026)

1. Background: The Role of Communication Networks in Real-World Deployment of Physical AI

In recent years, growing attention has been given to Physical AI, in which robots accurately perceive their surroundings and flexibly make decisions and act on them. However, in real-world environments, the type of AI processing and the computational load required for such flexible decisions and actions vary significantly depending on the situation. In scenarios requiring advanced decision-making, the computing resources installed on the robot alone may not be sufficient.

Against this backdrop, SoftBank and Ericsson are conducting joint research and development on AI-RAN and advancing the validation of Physical AI use cases. By leveraging the technologies of both companies to connect robots with external computing resources, we aim to enable more flexible and advanced decision-making and behavior.

When robots utilize external computing resources in this way, it is necessary to exchange the results of AI processing in real time, making the supporting communication network indispensable.

However, conventional networks have been designed primarily for downlink use cases such as video streaming. In addition, since communication resources are shared among many users and diverse types of traffic coexist over the internet, communication quality is provided on a best-effort basis. As a result, latency and jitter fluctuate depending on network congestion.

In contrast, networks for the AI era require real-time performance. In particular, it is important to secure uplink capacity for data sent from devices (e.g., sensor data), guarantee latency bounds, and maintain stable connectivity even during mobility. For example, in Physical AI, robots operate by repeatedly executing the cycle of “see (perceive) → think (infer) → act (control)” in real time.

Therefore, when using external computing resources, if the communication network experiences delays or interruptions—due to an increase in the number of connected devices or the generation of large volumes of data traffic—the “see → think → act” cycle may be disrupted, potentially leading to delays or instability in operation.

The communication network therefore plays a critical role in Physical AI.
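The effect of network delay on the “see → think → act” cycle can be illustrated with a simple latency budget. The sketch below is only an illustration: the stage names, all millisecond values, and the 100 ms deadline are assumptions chosen for the example, not figures from the experiment.

```python
from dataclasses import dataclass


@dataclass
class CycleLatency:
    """One pass of the see -> think -> act loop, all values in milliseconds."""
    capture_ms: float    # "see": sensor capture and encoding on the robot
    uplink_ms: float     # sensor data sent to the external computer
    inference_ms: float  # "think": AI inference on the external computer
    downlink_ms: float   # inference result returned to the robot
    actuation_ms: float  # "act": applying the command on the robot

    def total_ms(self) -> float:
        return (self.capture_ms + self.uplink_ms + self.inference_ms
                + self.downlink_ms + self.actuation_ms)


def meets_deadline(cycle: CycleLatency, deadline_ms: float) -> bool:
    """True if the whole loop finishes within the control deadline."""
    return cycle.total_ms() <= deadline_ms


# Hypothetical numbers: only the uplink delay differs between the two cases.
normal = CycleLatency(10.0, 15.0, 40.0, 10.0, 5.0)
congested = CycleLatency(10.0, 300.0, 40.0, 10.0, 5.0)
DEADLINE_MS = 100.0  # assumed control deadline, not taken from the experiment

print(normal.total_ms(), meets_deadline(normal, DEADLINE_MS))
print(congested.total_ms(), meets_deadline(congested, DEADLINE_MS))
```

Because the stages run in sequence, a delay in any single stage, here the uplink, is enough to push the whole cycle past its deadline.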

2. Challenges: Fixed Computing and Network Architectures Are Not Sufficient

In real-world environments, the required AI processing and computational load change dynamically depending on the situation. In addition, when utilizing external computing resources such as the MEC infrastructure of AI-RAN, not only the computational load but also communication latency and throughput may vary, and in some cases the network may become unstable.

For example, in simple environments, processing can be completed using the robot’s onboard computing resources. However, in crowded spaces or in situations where the number of obstacles increases, the load for image recognition and path planning may rise sharply, and more advanced decision-making and actions are required. In such cases, offloading processing to external computing resources enables the use of more advanced AI models for recognition and decision-making that would be difficult to execute on the robot alone.

On the other hand, the use of such external computing resources assumes that processing results are exchanged over the network. Therefore, if communication quality degrades due to congestion or other factors, end-to-end latency may increase. Furthermore, since robots are mobile, they are not always in environments with consistently high communication quality.

Thus, when offloading AI processing to external computing resources via the network, it is important not only to ensure low-latency and highly reliable communication, but also to control the robot, the network, and the computing resources in an integrated and dynamic manner.

In other words, what is required is an architecture capable of making integrated decisions about:

・Where and how to execute processing

・Which communication workloads to prioritize

3. Addressing the Challenges: An Offloading Architecture That Integrates and Controls Network and External Computing Resources

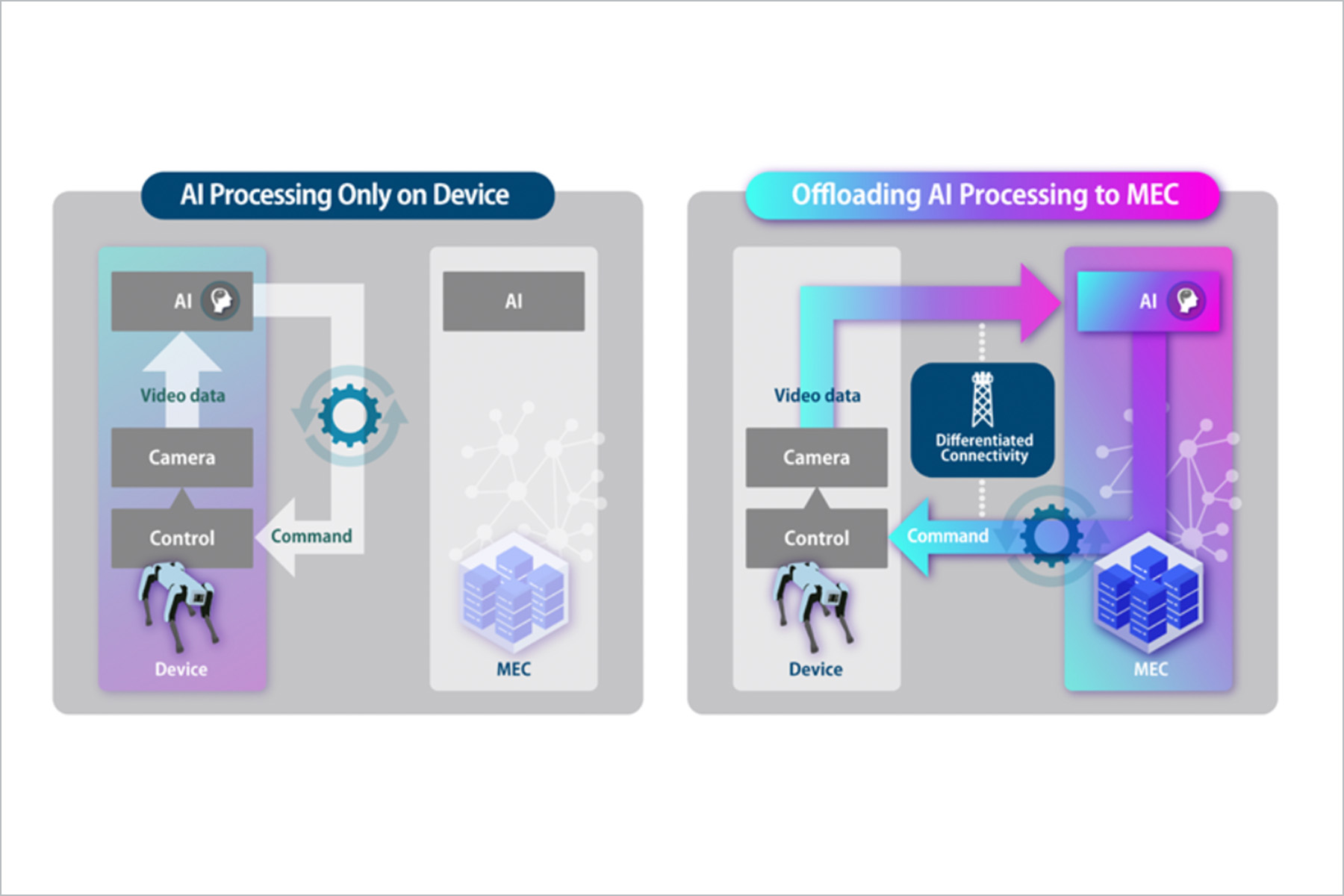

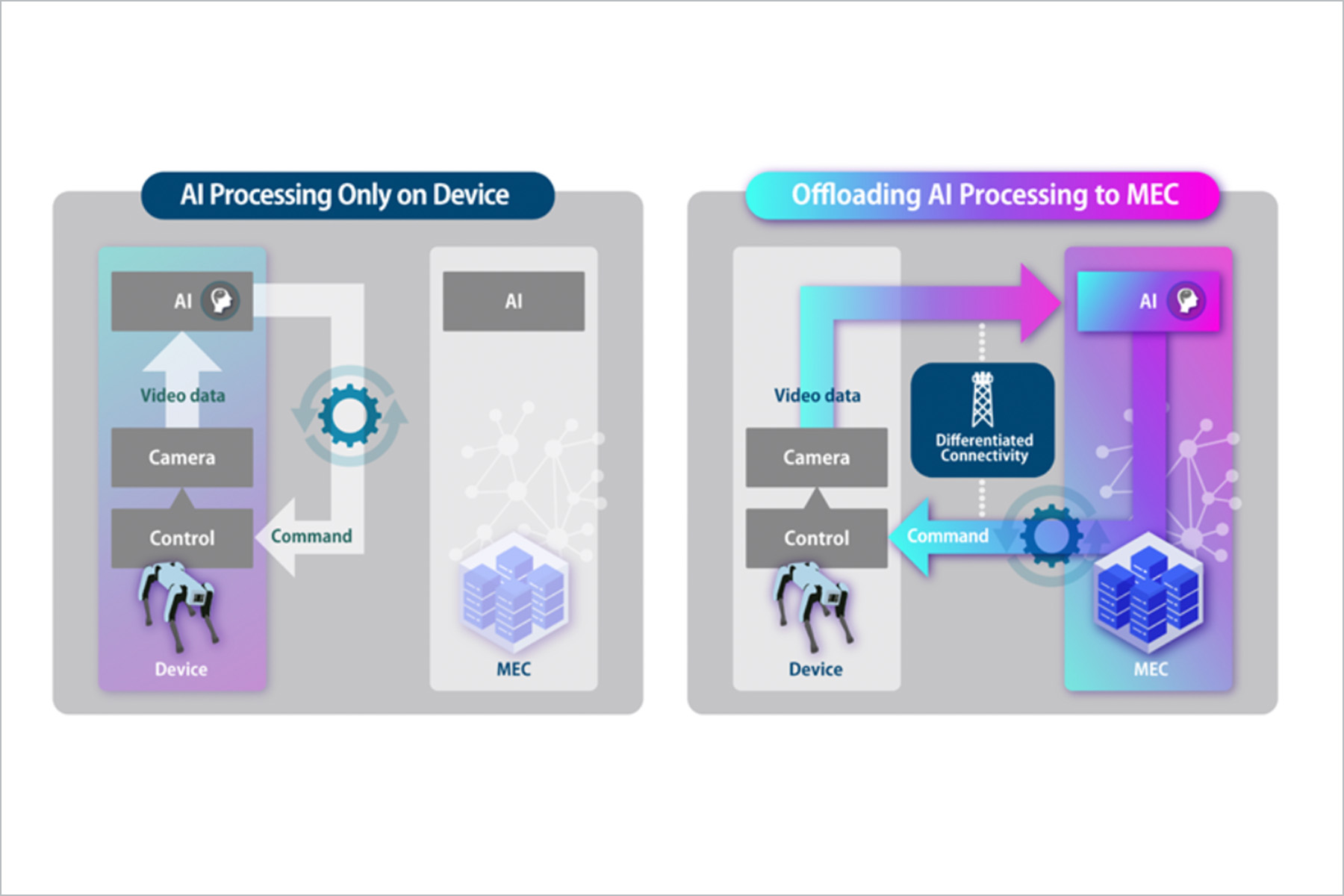

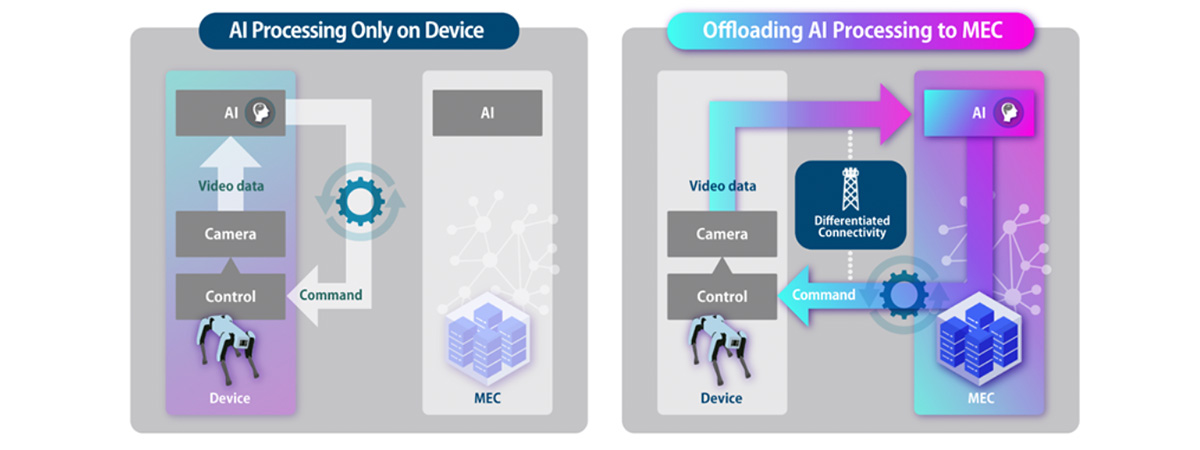

Figure 1. Architecture Overview

To address these challenges, we constructed an AI processing offloading architecture that tightly integrates and controls robots, networks, and external computing resources. This architecture combines SoftBank’s AI-RAN MEC-based real-time processing technology under development with Ericsson’s Differentiated Connectivity*1 capabilities.

This offloading architecture enables optimal robot control by dynamically switching where AI processing is executed—either on the robot itself or on external computing resources such as an MEC platform—based on factors such as available computational resources, communication quality, latency, and task complexity.
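As a rough illustration of how such a switching decision could be expressed, the following sketch chooses an execution location from a snapshot of the factors listed above. It is a minimal example under stated assumptions, not the logic used in the actual system: the `Snapshot` fields, the `choose_placement` function, and every threshold are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    ROBOT = "robot"
    MEC = "mec"


@dataclass
class Snapshot:
    robot_gpu_util: float   # 0.0-1.0, onboard GPU utilization
    uplink_mbps: float      # measured uplink throughput
    e2e_latency_ms: float   # measured or estimated latency via the MEC path
    task_complexity: float  # 0.0-1.0, e.g. derived from how crowded the scene is


# All thresholds are illustrative assumptions, not values from the experiment.
GPU_BUSY = 0.85
MIN_UPLINK_MBPS = 10.0
MAX_LATENCY_MS = 150.0
COMPLEX_TASK = 0.6


def choose_placement(s: Snapshot) -> Placement:
    """Offload only when more compute is needed and the network can carry it."""
    network_ok = (s.uplink_mbps >= MIN_UPLINK_MBPS
                  and s.e2e_latency_ms <= MAX_LATENCY_MS)
    needs_more_compute = (s.robot_gpu_util >= GPU_BUSY
                          or s.task_complexity >= COMPLEX_TASK)
    if needs_more_compute and network_ok:
        return Placement.MEC
    return Placement.ROBOT


quiet = Snapshot(robot_gpu_util=0.4, uplink_mbps=20.0,
                 e2e_latency_ms=60.0, task_complexity=0.2)
crowded = Snapshot(robot_gpu_util=0.95, uplink_mbps=20.0,
                   e2e_latency_ms=80.0, task_complexity=0.8)
print(choose_placement(quiet), choose_placement(crowded))
```

This sketch simply falls back to the robot when the link is poor. A fuller controller would also consider requesting better network treatment for the robot's traffic before giving up on offloading.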

Furthermore, on the communication side, it provides differentiated connectivity, including network slicing and traffic prioritization, based on diverse application requirements such as latency, throughput, and reliability. This allows network resources to be allocated according to what each application needs.
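The intuition behind traffic prioritization can be shown with a toy model of a single shared link. This is a deliberately simplified strict-priority queue, not a model of 5G network slicing or of Ericsson's implementation. The function name, packet sizes, and link rate are all assumptions made for illustration.

```python
def robot_wait_ms(queue, link_mbps, prioritize_robot):
    """Time until the robot's packet finishes transmission on a shared link.

    queue: list of (owner, size_kilobits) tuples in arrival order.
    """
    if prioritize_robot:
        # Stable sort: robot packets move to the front, others keep their order.
        queue = sorted(queue, key=lambda p: p[0] != "robot")
    elapsed_ms = 0.0
    for owner, size_kbit in queue:
        elapsed_ms += size_kbit / link_mbps  # kbit / (Mbit/s) = ms
        if owner == "robot":
            return elapsed_ms
    raise ValueError("no robot packet in queue")


# Hypothetical congestion: four large packets from other users arrive first.
queue = [("other", 800.0)] * 4 + [("robot", 400.0)]
LINK_MBPS = 10.0  # assumed link rate

print(robot_wait_ms(queue, LINK_MBPS, prioritize_robot=False))
print(robot_wait_ms(queue, LINK_MBPS, prioritize_robot=True))
```

In this toy model the robot's packet no longer waits behind other users' traffic once it is prioritized, which is the qualitative effect that differentiated treatment aims for.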

*1: Differentiated Connectivity refers to the capability to ensure stable communication performance—such as bandwidth and latency—through functions including network slicing implemented in the 5G SA core and 5G RAN software.

4. Proof-of-Concept Experiment

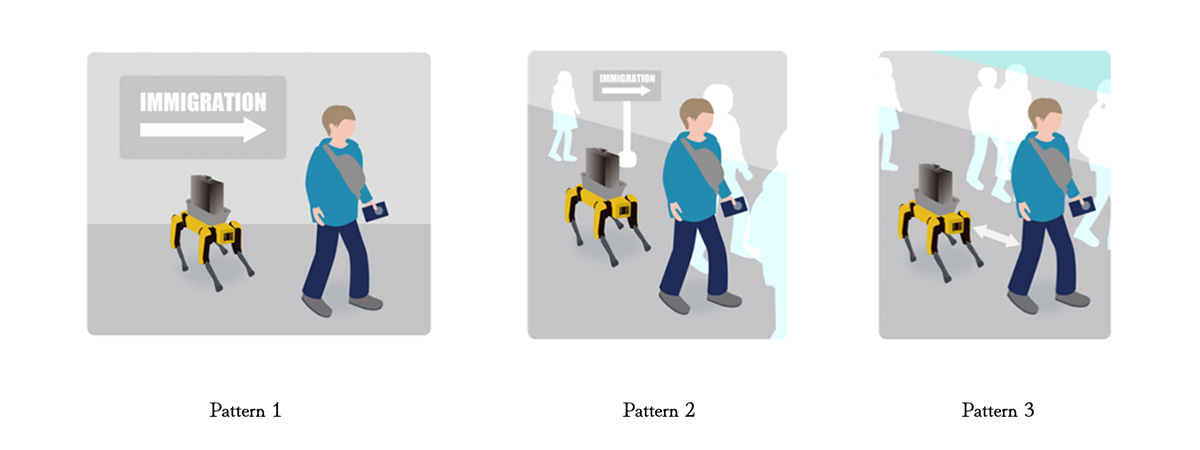

We conducted experiments assuming use cases such as “a robot that follows users inside an airport and assists with luggage transportation” and “a security robot that follows suspicious individuals.”

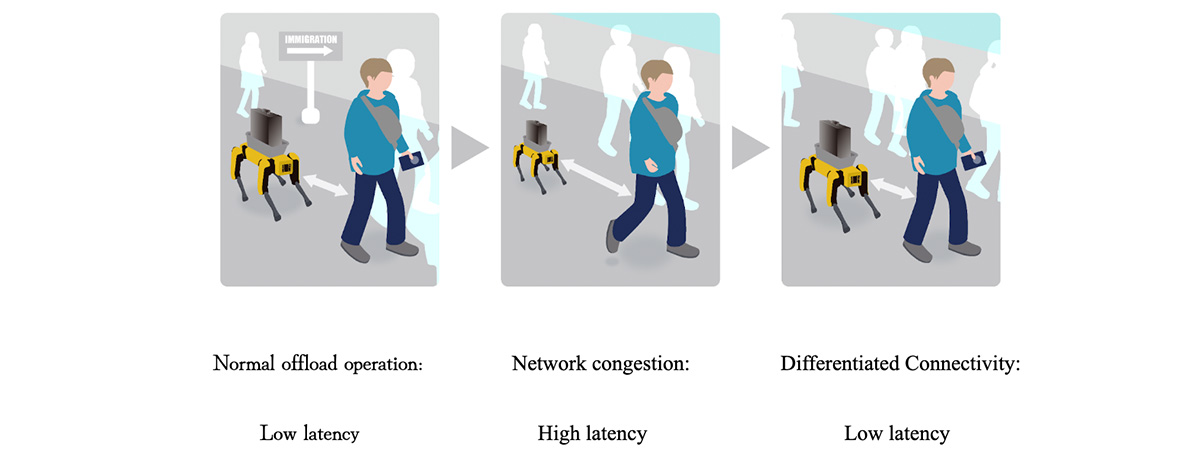

Figure 2. Overall image of the PoC

Pattern 1: AI processing is completed on the robot alone

In situations with few people and a simple environment, AI processing can be executed using only the robot’s onboard computing resources (CPU/GPU), and end-to-end latency can be minimized. On the other hand, the robot’s CPU/GPU usage becomes high, leading to increased power consumption.

Figure 3. Processing performed solely on the robot

Pattern 2: AI processing is offloaded to the MEC platform

As the density of people increases and the environment becomes more complex, more flexible decision-making and actions are required from the robot. In such situations, more advanced AI is needed, and AI processing is offloaded to the MEC platform. As a result, the robot can operate more flexibly than in standalone mode, and the usage of its onboard computing resources is reduced.

Figure 4. Processing in offload mode

Pattern 3: Intervention with Differentiated Connectivity

As shown in the figure below, while the robot operates in offload mode, network congestion may increase (for example, because the number of users grows), resulting in higher network latency. In such cases, Differentiated Connectivity, including network slicing, is applied to prioritize and control the robot’s traffic. As a result, end-to-end latency decreases and application performance improves.

Figure 5. Intervention with Differentiated Connectivity

The system consists of a Unitree Go2 robot equipped with a Jetson, a GPU-equipped MEC platform (the external computing resource), a 5G network supporting Differentiated Connectivity, AI models such as a CNN (YOLO) and an LLM, and multiple communication methods including ROS2, MQTT, and HTTP. Decisions on offloading and on intervention with Differentiated Connectivity are made by a mechanism that monitors multiple metrics, such as CPU/GPU usage, communication throughput, and end-to-end processing latency, and evaluates them together.
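One common way to turn monitored metrics into a stable on/off decision is to smooth the samples and apply hysteresis, so that a single spike does not cause rapid toggling. The sketch below applies this idea to end-to-end latency only. It is an assumption about how such a mechanism might look, not a description of the mechanism used in the PoC: the class name, window size, and thresholds are hypothetical.

```python
from collections import deque


class LatencyTrigger:
    """Requests prioritization when smoothed latency is high, releases it when low."""

    def __init__(self, window=5, high_ms=300.0, low_ms=150.0):
        self.samples = deque(maxlen=window)  # most recent latency samples
        self.high_ms = high_ms               # switch on at or above this average
        self.low_ms = low_ms                 # switch off at or below this average
        self.active = False

    def update(self, latency_ms: float) -> bool:
        """Add one sample and return whether prioritization should be active."""
        self.samples.append(latency_ms)
        if len(self.samples) < self.samples.maxlen:
            return self.active  # wait until the window is full
        avg = sum(self.samples) / len(self.samples)
        if not self.active and avg >= self.high_ms:
            self.active = True
        elif self.active and avg <= self.low_ms:
            self.active = False
        return self.active


trigger = LatencyTrigger()
# Hypothetical latency trace in ms: calm, sustained congestion, then recovery.
trace = [100.0] * 5 + [400.0] * 5 + [100.0] * 5
print([trigger.update(x) for x in trace])
```

The gap between the two thresholds is the design choice that matters here: it prevents the intervention from being switched on and off repeatedly while latency hovers near a single limit. The same pattern could be applied to CPU/GPU usage or throughput.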

As a result of the experiments, we confirmed the following two points:

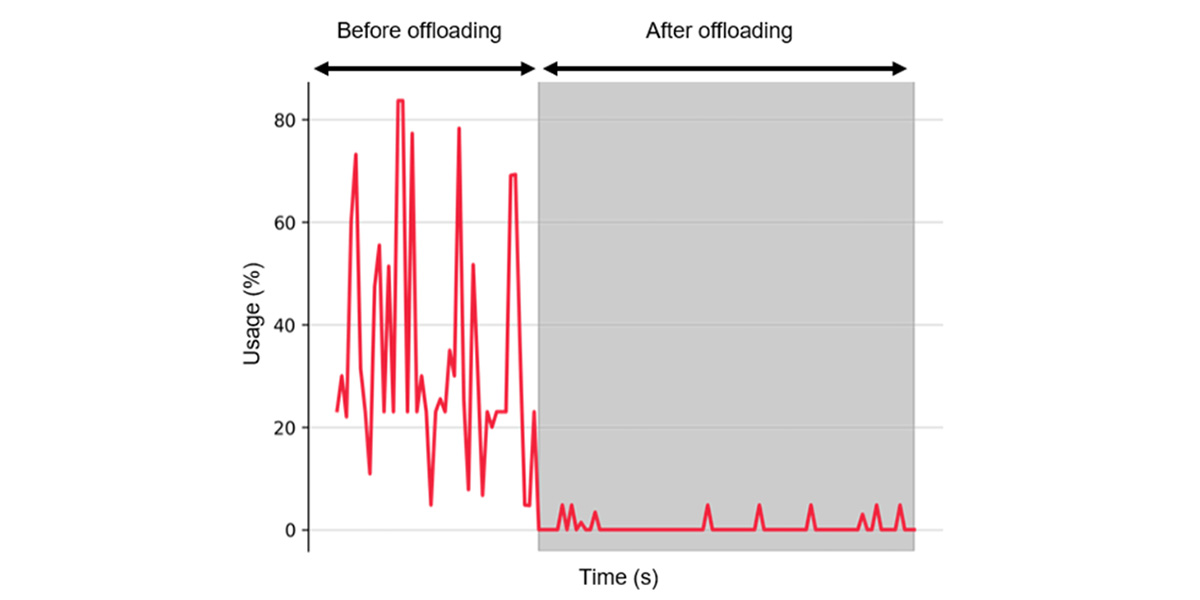

1. In the experiments for Pattern 1 and Pattern 2, as shown in the graph of robot-side GPU usage below, GPU usage on the robot decreased significantly after offloading (Pattern 2) compared with before offloading (Pattern 1).

Figure 6. Robot-side GPU usage

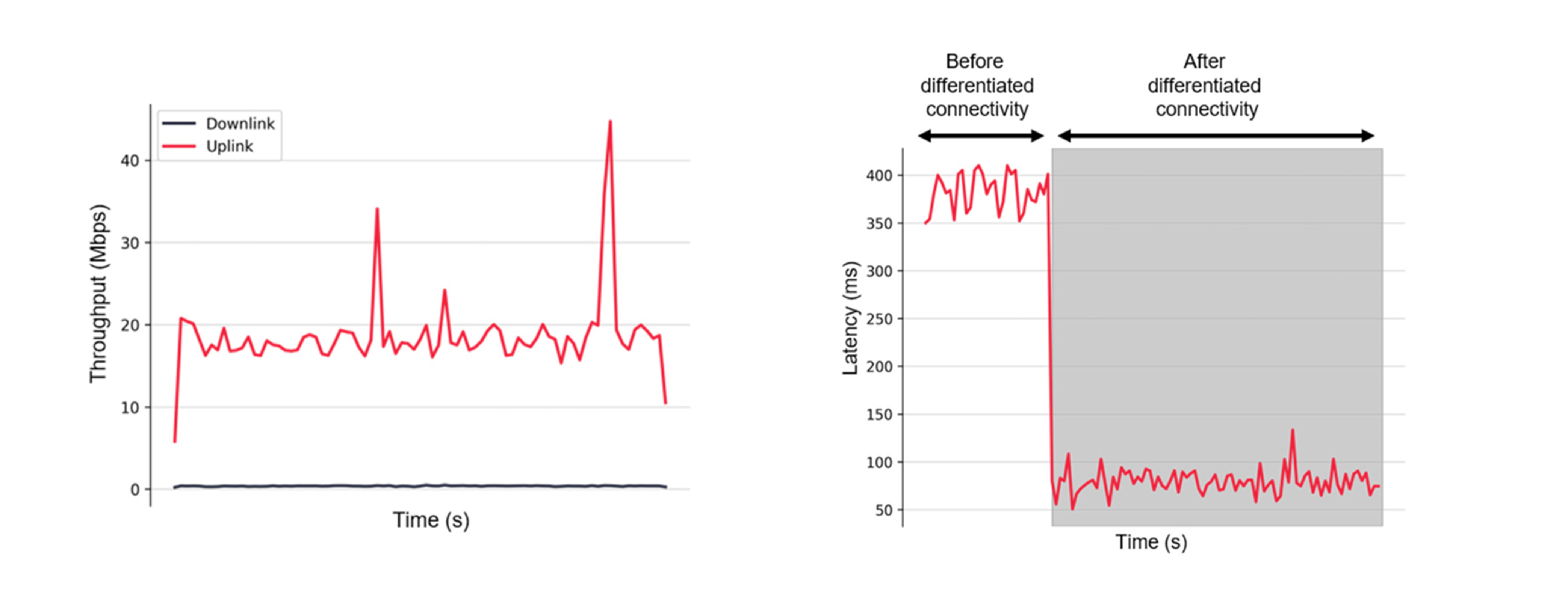

2. In the experiment for Pattern 3, when the uplink throughput decreased (in the last part of the throughput graph below, from approximately 20 Mbps to below 10 Mbps), the robot’s end-to-end latency increased significantly, as shown in the corresponding latency graph. After the intervention with Differentiated Connectivity, the latency decreased significantly, from approximately 400 ms to approximately 100 ms.

Figure 7. (Left) Robot communication throughput (before offloading), (Right) Robot end-to-end latency
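To put the approximate latency figures reported above (about 400 ms before the intervention and about 100 ms after it) in physical terms, the short calculation below computes the relative reduction and the distance a robot would travel before a command takes effect. The 1.0 m/s walking speed is an assumption for illustration only and was not reported in the experiment.

```python
def distance_during_latency_m(speed_m_per_s: float, latency_ms: float) -> float:
    """Distance covered while waiting for one end-to-end response."""
    return speed_m_per_s * latency_ms / 1000.0


BEFORE_MS = 400.0       # approximate latency before the intervention
AFTER_MS = 100.0        # approximate latency after the intervention
ASSUMED_SPEED = 1.0     # m/s, hypothetical robot speed

reduction = (BEFORE_MS - AFTER_MS) / BEFORE_MS
print(f"relative reduction: {reduction:.0%}")
print(f"distance before: {distance_during_latency_m(ASSUMED_SPEED, BEFORE_MS):.2f} m")
print(f"distance after:  {distance_during_latency_m(ASSUMED_SPEED, AFTER_MS):.2f} m")
```

Under this assumed speed, the reduction in latency corresponds to a proportionally shorter distance travelled on stale information, which matters when following a person through a crowd.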

In real-world environments, these three patterns are not independent but switch continuously according to environmental and communication conditions. In addition to the quantitative experiments described above, we also conducted similar qualitative experiments. For example, although the computational resource graph shown above is an example using GPU, similar trends were observed in inference experiments using CPU. Furthermore, when conducting experiments using isolated Wi-Fi in a non-interference environment instead of 5G, similar trends were observed in changes in computational resource usage and differences in latency depending on the time of day.

Based on the above experimental results, we demonstrated that an offloading architecture that jointly controls computing resources and communication resources, particularly communication networks capable of Differentiated Connectivity, is important and effective for achieving stable Physical AI.

5. Conclusion

In this demonstration, we confirmed that stable operation of Physical AI can be achieved by combining dynamic offloading of AI processing with network optimization through Differentiated Connectivity.

In particular, we experimentally demonstrated that, in response to increased computational load due to environmental changes, offloading to the MEC platform can significantly reduce GPU utilization on the robot side. We also showed that even under network congestion, applying priority control through network slicing can significantly improve end-to-end latency.

To accelerate the real-world adoption of Physical AI, it is essential not only to advance AI models but also to integrate the communication and computing infrastructure that supports them. Based on the insights gained from this demonstration and related initiatives, SoftBank and Ericsson aim to realize the next-generation networks required for the Physical AI era.