
Mechanism of the AITRAS Orchestrator for Enabling AI-RAN: Resource Optimization Using a Dynamic Scoring Framework

#AI-RAN #AITRAS

Mar 05, 2026

SoftBank Corp.

SoftBank is working toward the realization of AI-RAN, which aims to achieve both the advancement of telecommunications infrastructure and the acceleration of AI utilization. AI-RAN enables flexible execution and control of both AI workloads and RAN workloads on a shared infrastructure. As a member of the AI-RAN Alliance, an industry consortium focused on the infrastructure and software architectures required to realize AI-RAN, SoftBank has been advancing technical studies and implementations from the perspective of a mobile network operator (MNO).

In this article, we provide a detailed explanation of the role of the AI-RAN Orchestrator and the mechanism of its core component, the Dynamic Scoring Framework.

1. Key Functions Required for the AI-RAN Orchestrator

In AI-RAN, computational resources for running RAN and AI workloads are deployed at edge sites and data centers. These resources are organized into clusters, forming a nationwide multi-cluster environment that integrates all clusters across the network.

In this context, the AI-RAN Orchestrator is responsible for the following roles:

・Allocating available resources to the AI and RAN domain-specific orchestrators (DSOs), distributing resources across the entire infrastructure so that each DSO can operate at its full potential.[2][3]

・Directly accepting workloads, allocating appropriate resources to them, and executing them.

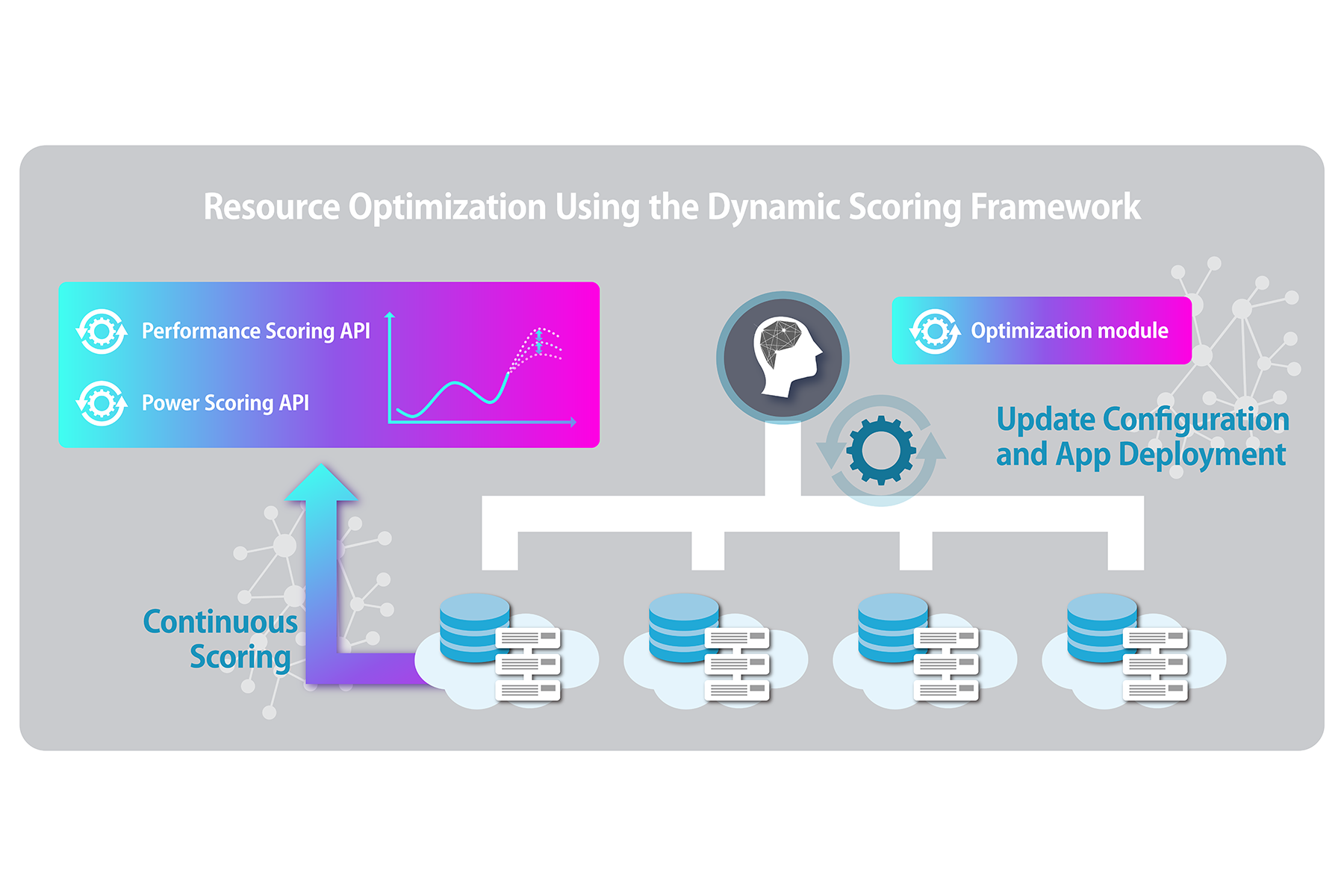

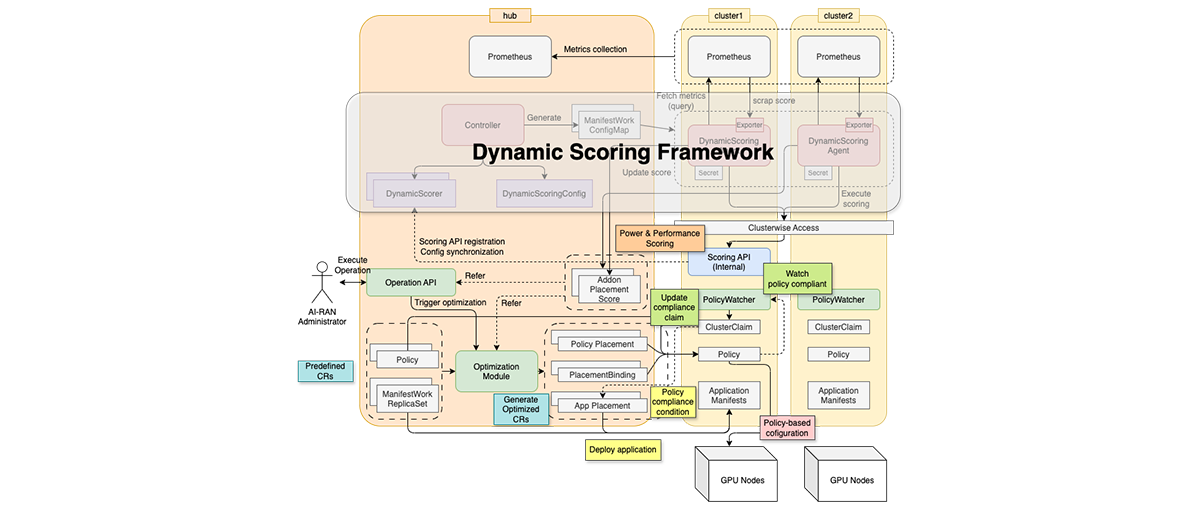

The following figure illustrates the reference system architecture of the orchestrator designed to realize these capabilities.[4]

Figure 1. Reference System Architecture of the AI-RAN Orchestrator

The system architecture consists of the following functional blocks:

1. Multi-Cluster Management: Manages the lifecycle of clusters, including registration and updates.

2. Metrics Collection: Collects resource status across managed clusters.

3. Dynamic Scoring: Analyzes and evaluates the collected resource data.

4. Multi-Cluster Scheduling: Determines an optimal resource allocation plan based on the evaluation results.

5. Resource Arrangement: Applies cluster configuration changes and manages application lifecycles according to the allocation plan.
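To make the data flow between these five blocks concrete, here is a minimal Python sketch in which each block is a plain function. All names (`Cluster`, `gpu_free`, the function names) are hypothetical and do not come from AITRAS or OCM; the scoring and scheduling rules are deliberately trivial stand-ins.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    name: str
    metrics: dict = field(default_factory=dict)  # e.g. {"gpu_free": 2}


def manage_clusters(registry: dict, cluster: Cluster) -> None:
    """Multi-Cluster Management: register a cluster or update its entry."""
    registry[cluster.name] = cluster


def collect_metrics(registry: dict) -> dict:
    """Metrics Collection: gather resource status from every managed cluster."""
    return {name: c.metrics for name, c in registry.items()}


def dynamic_scoring(metrics: dict) -> dict:
    """Dynamic Scoring: reduce each cluster's metrics to one score (here: free GPUs)."""
    return {name: m.get("gpu_free", 0) for name, m in metrics.items()}


def schedule(scores: dict, replicas: int) -> list:
    """Multi-Cluster Scheduling: choose the highest-scoring clusters (ties by name)."""
    ranked = sorted(scores, key=lambda n: (-scores[n], n))
    return ranked[:replicas]


def arrange(plan: list, app: str) -> list:
    """Resource Arrangement: turn the plan into (cluster, app) deployment actions."""
    return [(cluster, app) for cluster in plan]


registry: dict = {}
for c in (Cluster("edge-a", {"gpu_free": 1}),
          Cluster("dc-b", {"gpu_free": 4}),
          Cluster("edge-c", {"gpu_free": 2})):
    manage_clusters(registry, c)

actions = arrange(schedule(dynamic_scoring(collect_metrics(registry)), replicas=2), "llm-inference")
print(actions)  # [('dc-b', 'llm-inference'), ('edge-c', 'llm-inference')]
```

In a real orchestrator each arrow in this chain is asynchronous and backed by Kubernetes resources; the sketch only shows which block consumes which output.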

In the AITRAS Orchestrator, which SoftBank is developing in-house, these functional blocks are built on Red Hat OpenShift components where appropriate, and the additional components required are developed internally. For example, Red Hat Advanced Cluster Management for Kubernetes is used for Multi-Cluster Management and Metrics Collection. Red Hat Advanced Cluster Management for Kubernetes is a tool that streamlines resource management in multi-cluster environments; it incorporates functionality from the open-source project Open Cluster Management (OCM), simplifying the management of multi-cluster infrastructure.

2. Challenges in the AI-RAN Orchestrator

While OCM provides a solid foundation for multi-cluster management, realizing the AI-RAN Orchestrator requires seamless integration of Dynamic Scoring, Multi-Cluster Scheduling, and Resource Arrangement.

For example, when orchestrating the deployment of multiple AI models on the AI-RAN infrastructure, several requirements arise:

・Resource planning should be optimized from different perspectives depending on the state of the multi-cluster environment.

- When sufficient resources are available, deployments should be optimized to maximize performance.

- Conversely, when power supply is constrained, workloads must still be executed while limiting resource consumption.

・Resource planning must flexibly reflect the dynamic resource status of each cluster.

- Planning should consider not only the current resource state but also predictions of future resource availability.

- Since the required evaluation depends on the optimization logic, multiple evaluation strategies should be supported and executable.

- Evaluations should leverage metrics from each cluster locally, while aggregating the results centrally to enable globally optimal decision-making.

・The formulated resource plan must be executed in an appropriate and well-defined sequence.

- When application deployment involves infrastructure configuration changes, state transitions must be carefully orchestrated. For example, infrastructure updates must be completed and the nodes confirmed to be operating correctly before applications are deployed to them.
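The first and last requirements can be illustrated with a small, hypothetical Python sketch: the objective switches between performance and power depending on the available power headroom, and a simple sequencing rule refuses to deploy the application until the infrastructure change and node readiness are confirmed. The headroom threshold and the candidate fields are assumptions chosen for illustration.

```python
def choose_objective(power_headroom_w: float, min_headroom_w: float = 500.0) -> str:
    """Optimize for performance when power is plentiful, otherwise for power."""
    return "performance" if power_headroom_w >= min_headroom_w else "power"


def pick_plan(candidates: list, objective: str) -> dict:
    """candidates: [{'name', 'throughput', 'power_w'}, ...]"""
    if objective == "performance":
        return max(candidates, key=lambda c: c["throughput"])
    # Power-constrained: still run the workload, but with the smallest draw.
    return min(candidates, key=lambda c: c["power_w"])


def next_step(infra_updated: bool, nodes_ready: bool) -> str:
    """Sequencing rule: finish the infrastructure change before deploying the app."""
    if not infra_updated:
        return "apply-infrastructure-change"
    if not nodes_ready:
        return "wait-for-nodes"
    return "deploy-application"


CANDIDATES = [
    {"name": "large", "throughput": 120, "power_w": 900},
    {"name": "small", "throughput": 60, "power_w": 400},
]
print(pick_plan(CANDIDATES, choose_objective(1000))["name"])  # large
print(pick_plan(CANDIDATES, choose_objective(200))["name"])   # small
```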

These diverse requirements highlight the complexity of designing and implementing the AI-RAN Orchestrator.

To address these challenges, we implemented the Dynamic Scoring Framework as an open-source component within OCM.

This framework allows proprietary evaluation and optimization logic to be integrated to realize Multi-Cluster Scheduling, as well as Resource Arrangement in coordination with other OCM components. Because it provides a mechanism for flexibly extending and integrating these capabilities, we have contributed the framework to the community as an open-source project.[5]

The Dynamic Scoring Framework simplifies resource optimization in multi-cluster environments, enabling efficient resource allocation between AI and RAN workloads.

3. Mechanism of the Dynamic Scoring Framework

The Dynamic Scoring Framework is designed to efficiently evaluate resources in a multi-cluster environment.

In designing and implementing this framework, we focused on the following key concepts:

・Modular Architecture for Evaluation Logic Development: A modular design that allows evaluation logic developers to focus solely on implementing their logic.

- Definition of a common interface for evaluation logic, separating it as independent modules.

- An interface that declaratively specifies how the evaluation logic should be used.

- By defining generalized interfaces, the framework can manage diverse evaluation criteria such as application performance, power consumption, and resource utilization.

・Distributed Execution and Centralized Decision-Making: Distribution of evaluation agents and central aggregation for decision-making.

- Agents deployed in each cluster execute scoring based on evaluation logic using local cluster resource metrics.

- Evaluation results are aggregated in a hub cluster in a format suitable for decision-making.

・Minimizing Impact on Workloads: A design ensuring that resource consumption by the evaluation logic itself does not interfere with workload execution.

- By modularizing evaluation logic and executing it using available resources, the impact on workloads is reduced.

- A mechanism to aggregate evaluation execution latency and monitor the performance of the evaluation logic itself.

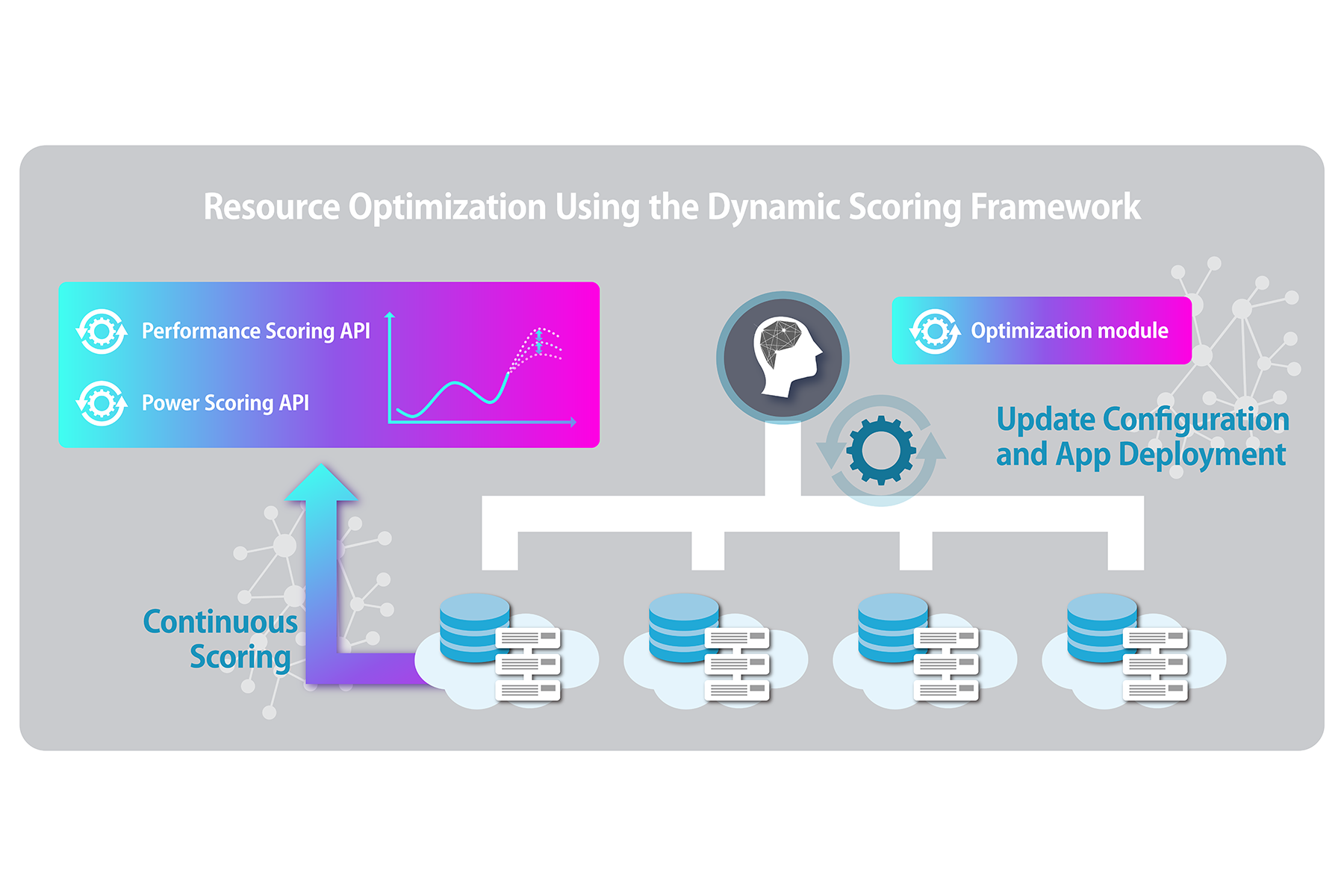

The overall workflow of the Dynamic Scoring Framework is illustrated in the figure below.

Configuration is distributed across managed clusters, where evaluation logic is executed using each cluster’s local metrics. The resulting scores are then aggregated in the hub cluster and utilized for resource allocation decisions.

Figure 2. Operational Overview of the Dynamic Scoring Framework

In the following sections, we explain the details of the Dynamic Scoring Framework.

3.1. Overall Architecture of the Dynamic Scoring Framework

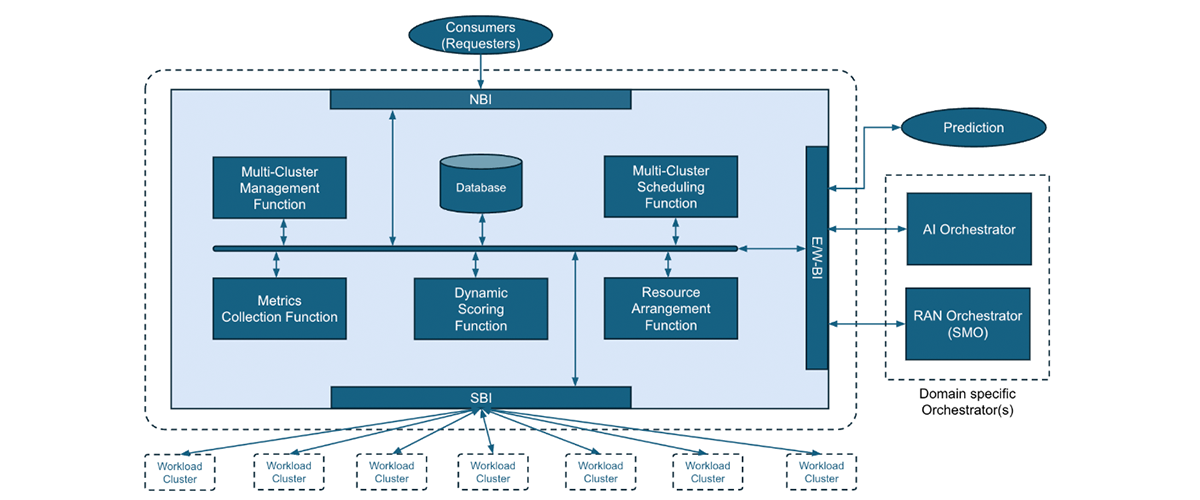

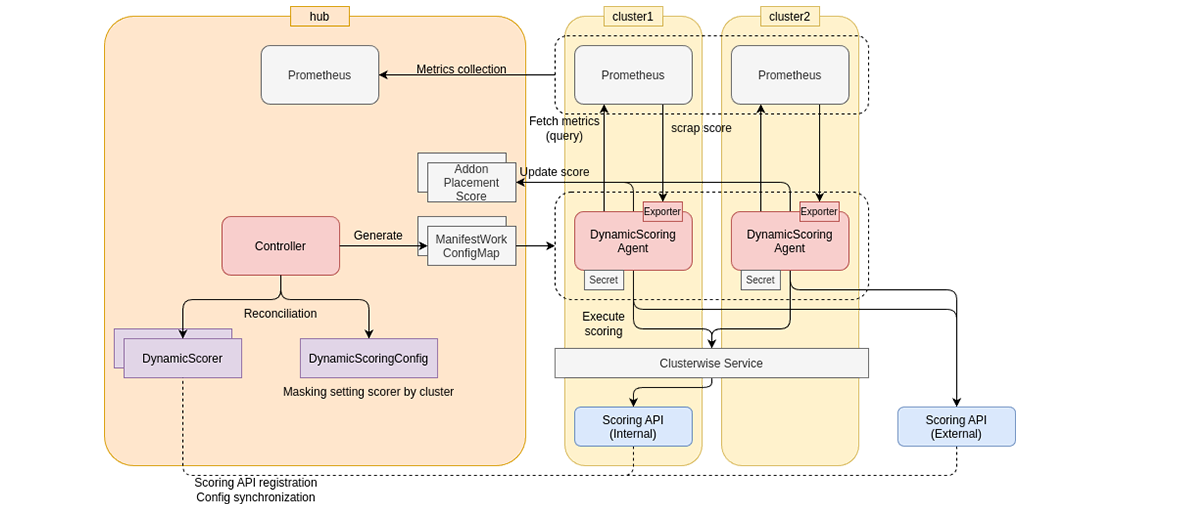

The overall architecture of the Dynamic Scoring Framework is illustrated in the figure below.

Figure 3. Overall Architecture of the Dynamic Scoring Framework

The key components are as follows:

・Scoring API

・DynamicScorer

・DynamicScoringConfig

・DynamicScoringAgent

Detailed specifications are available in the GitHub repository. Here, we briefly describe the role of each component.

3.2. Scoring API

The Scoring API is a component that provides evaluation logic as a Web API.

Dynamically evaluating cluster conditions is an essential part of orchestration, because optimization is possible only once an evaluation has been performed. Examples include assessing predicted outcomes based on total cluster power consumption, or evaluating cost-effectiveness relative to resource usage based on accelerator power consumption and application throughput.

The Scoring API in the Dynamic Scoring Framework defines a generalized, common interface for evaluation modules: each module takes time-series metrics as input and returns scores computed from them. This modular design allows the evaluation logic to operate independently of other system components.
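As an illustration of this contract, the following Python sketch models a scoring module as a class that receives time series and returns one score per series. It is a local, in-process simplification (the actual Scoring API is a Web API), and the class names, the input shape, and the example logic are hypothetical.

```python
from abc import ABC, abstractmethod
from statistics import mean

# A time series is a list of (timestamp_seconds, value) pairs.


class ScoringModule(ABC):
    """Common contract: time-series metrics in, scores out."""

    @abstractmethod
    def score(self, series_by_key: dict) -> dict:
        """Map each input series (keyed by cluster, node, GPU, ...) to a score."""


class InverseMeanUtilization(ScoringModule):
    """Example logic: lower average utilization (0..1) yields a higher score (0..100)."""

    def score(self, series_by_key: dict) -> dict:
        return {
            key: round((1.0 - mean(v for _, v in series)) * 100)
            for key, series in series_by_key.items()
        }


scores = InverseMeanUtilization().score({
    "edge-a": [(0, 0.2), (60, 0.4)],
    "dc-b": [(0, 0.9), (60, 0.7)],
})
print(scores)  # {'edge-a': 70, 'dc-b': 20}
```

Because callers only depend on `score`, a power-forecast module or a throughput-per-watt module can be swapped in without touching the rest of the system, which is the point of the common interface.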

To use a modular Scoring API effectively, the following information must be known precisely:

・Prerequisite information for the input time series

- Data source (type of metrics such as power consumption, resource utilization, throughput, etc.)

- The granularity of the time series to be input

・Evaluation interval: The temporal interval at which the evaluation is executed

・Schema of the score information to be output

The Dynamic Scoring Framework lets each Scoring API declare, through its own config endpoint, the information that specifies how it should be executed. In other words, developers implementing a Scoring API can define how their evaluation logic is intended to be invoked, and the framework then calls the Scoring API with inputs that match the developer’s intent.
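The kind of declaration a config endpoint could return is sketched below as a Python dictionary, together with a small validity check. Every field name here is a hypothetical example covering the items listed above (data source, granularity, evaluation interval, output schema); the actual schema is defined in the Dynamic Scoring Framework repository. The metric `DCGM_FI_DEV_POWER_USAGE` is assumed to be exported by the NVIDIA DCGM exporter.

```python
# Hypothetical config-endpoint response; field names are illustrative only.
CONFIG = {
    "name": "gpu-power-forecast",
    "source": {
        "type": "prometheus",                     # data source
        "query": "sum(DCGM_FI_DEV_POWER_USAGE)",  # metric to fetch (assumed DCGM exporter metric)
        "range_seconds": 3600,                    # how much history to send per call
        "step_seconds": 60,                       # granularity of the input time series
    },
    "interval_seconds": 300,                      # how often the evaluation runs
    "output": {"score": "integer", "labels": ["cluster"]},  # schema of the returned scores
}

REQUIRED_KEYS = {"name", "source", "interval_seconds", "output"}


def validate(config: dict) -> list:
    """Return a list of problems; an empty list means the declaration is usable."""
    problems = [f"missing {key}" for key in sorted(REQUIRED_KEYS - set(config))]
    source = config.get("source", {})
    step = source.get("step_seconds", 0)
    if step <= 0:
        problems.append("step_seconds must be positive")
    elif source.get("range_seconds", 0) < step:
        problems.append("range_seconds must cover at least one step")
    return problems


print(validate(CONFIG))  # []
print(validate({"name": "demo"}))
```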

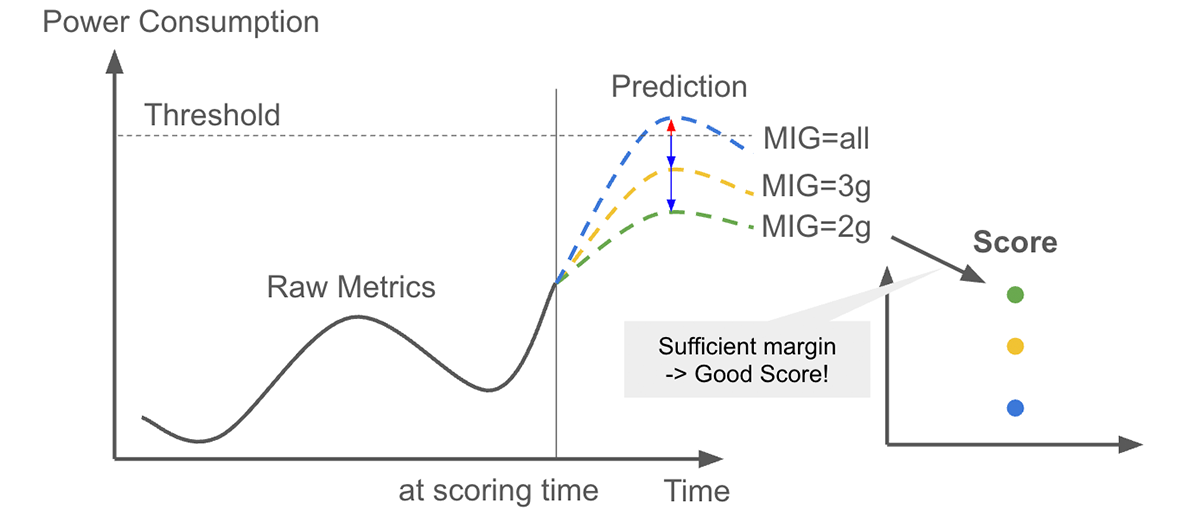

One Scoring API developed by SoftBank pre-evaluates application deployments based on forecasts of future power consumption.

Figure 4. Pre-deployment Evaluation of Application Deployment Based on Power Consumption Prediction

The system predicts cluster power consumption and evaluates, for each GPU configuration, whether future power usage will exceed a defined threshold based on measurements at deployment. This enables the selection of the most suitable GPU configuration for the target application.
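A simplified version of this check is sketched below. The article does not describe SoftBank’s prediction model, so a least-squares linear trend is swapped in purely for illustration; the configuration names, power figures, and threshold are invented example values.

```python
def linear_forecast(series: list, horizon_s: float) -> float:
    """Least-squares linear trend over (t_seconds, watts) pairs,
    extrapolated horizon_s seconds past the last sample."""
    n = len(series)
    t_mean = sum(t for t, _ in series) / n
    w_mean = sum(w for _, w in series) / n
    var = sum((t - t_mean) ** 2 for t, _ in series)
    cov = sum((t - t_mean) * (w - w_mean) for t, w in series)
    slope = cov / var if var else 0.0
    t_future = series[-1][0] + horizon_s
    return w_mean + slope * (t_future - t_mean)


def evaluate_configs(series, configs, threshold_w, horizon_s=3600):
    """configs: {name: {'added_power_w', 'throughput'}}, where added_power_w is the
    extra draw measured when that GPU configuration was deployed.
    Returns ({name: (forecast_w, fits)}, best fitting name or None)."""
    base = linear_forecast(series, horizon_s)
    results = {}
    for name, cfg in configs.items():
        forecast = base + cfg["added_power_w"]
        results[name] = (forecast, forecast <= threshold_w)
    fitting = [name for name, (_, ok) in results.items() if ok]
    best = max(fitting, key=lambda name: configs[name]["throughput"], default=None)
    return results, best


SERIES = [(0, 1000.0), (1800, 1100.0), (3600, 1200.0)]  # cluster power samples
CONFIGS = {
    "config-small": {"added_power_w": 200.0, "throughput": 50.0},
    "config-large": {"added_power_w": 450.0, "throughput": 120.0},
}
results, best = evaluate_configs(SERIES, CONFIGS, threshold_w=1700.0)
print(best)  # config-small
```

Here the trend predicts about 1400 W one hour ahead, so only the smaller configuration keeps the forecast under the 1700 W threshold, even though the larger one has higher throughput.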

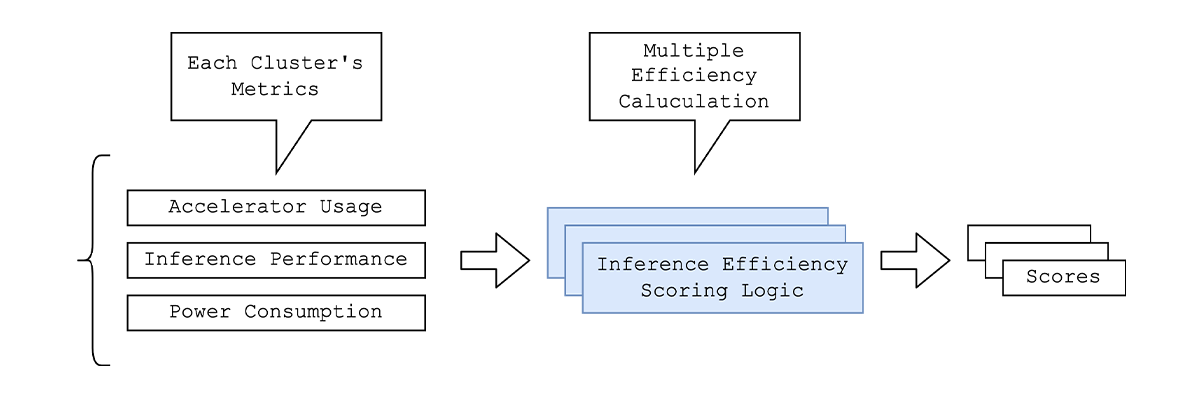

Because the Scoring API is defined as a generalized interface, the framework can support composite evaluations based on multiple metrics, such as cost-effectiveness measured against resource usage and application performance.

Figure 5. Inference Performance Evaluation Based on Multiple Metrics
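A composite score of this kind can be as simple as throughput per watt. The sketch below computes tokens per joule for each candidate and scales the results to 0..100 relative to the best one; the metric names and the scaling are assumptions, not the logic of the SoftBank Scoring API.

```python
def tokens_per_joule(samples: dict) -> dict:
    """samples: {name: {'tokens_per_s', 'gpu_power_w'}}; tokens/s divided by W gives tokens/J.
    Candidates with no measured power are skipped."""
    return {
        name: s["tokens_per_s"] / s["gpu_power_w"]
        for name, s in samples.items()
        if s["gpu_power_w"] > 0
    }


def scale_to_best(values: dict) -> dict:
    """Express each (positive) value as a 0..100 score relative to the best candidate."""
    best = max(values.values())
    return {name: round(100 * v / best) for name, v in values.items()}


SAMPLES = {
    "deploy-a": {"tokens_per_s": 1200.0, "gpu_power_w": 400.0},
    "deploy-b": {"tokens_per_s": 1500.0, "gpu_power_w": 600.0},
}
print(scale_to_best(tokens_per_joule(SAMPLES)))  # {'deploy-a': 100, 'deploy-b': 83}
```

Note that `deploy-b` has the higher raw throughput but the lower score: the composite metric rewards efficiency rather than peak performance.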

3.3. DynamicScorer

The DynamicScorer is a Kubernetes custom resource that registers a developed Scoring API with the Dynamic Scoring Framework. It stores the information required to execute the scoring logic.

When the configuration endpoint of a Scoring API is registered in the DynamicScorer, the declared configuration is automatically reflected in the state of the custom resource.

Depending on the use case, infrastructure operators may want to override certain settings, such as the scoring interval. The framework therefore supports:

・Executing scoring entirely based on the configuration endpoint.

・Executing scoring based on operator-overridden settings.

This hybrid configuration model provides flexibility to accommodate different operational requirements.
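One plausible way to combine the two sources is a recursive merge in which operator overrides take precedence key by key. The Python sketch below assumes both the declared configuration and the overrides are plain dictionaries; the key names are hypothetical.

```python
import copy
from typing import Optional


def effective_config(declared: dict, overrides: Optional[dict] = None) -> dict:
    """Declared settings from the config endpoint, with operator overrides applied on top.
    Nested dictionaries are merged key by key; any other override value replaces the declared one."""
    merged = copy.deepcopy(declared)
    for key, value in (overrides or {}).items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = effective_config(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


declared = {"interval_seconds": 300, "source": {"step_seconds": 60, "range_seconds": 3600}}
print(effective_config(declared))                           # runs exactly as declared
print(effective_config(declared, {"interval_seconds": 60}))  # operator shortens the interval
```

Deep-copying keeps the declared configuration untouched, so the framework can always fall back to the Scoring API’s own declaration when an override is removed.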

3.4. DynamicScoringConfig

The DynamicScoringConfig is a Kubernetes resource that controls how registered DynamicScorers are distributed to managed clusters.

For example, if GPU evaluation logic is registered as a DynamicScorer, there is no need to run it on clusters without GPUs. Distribution rules, including filters such as this one, are defined in the DynamicScoringConfig.

When a DynamicScoringConfig is created, the required information is aggregated for each managed cluster based on the defined rules and the registered DynamicScorers. The aggregated configuration is then distributed to each cluster as a ConfigMap.
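The distribution step can be sketched as follows: each DynamicScorer is matched against per-scorer label rules, and the matching scorers are rendered into one ConfigMap per cluster. The rule format, the `gpu` label, and the ConfigMap name and namespace are hypothetical; only the ConfigMap structure itself is standard Kubernetes.

```python
import json


def scorers_for_cluster(cluster_labels: dict, scorers: dict, rules: dict) -> list:
    """rules: {scorer_name: {label: value}}; a scorer without a rule goes to every cluster."""
    return [
        name for name in scorers
        if all(cluster_labels.get(k) == v for k, v in rules.get(name, {}).items())
    ]


def render_configmaps(clusters: dict, scorers: dict, rules: dict) -> dict:
    """Aggregate the selected DynamicScorer settings into one ConfigMap per cluster."""
    out = {}
    for cluster, labels in clusters.items():
        chosen = {n: scorers[n] for n in scorers_for_cluster(labels, scorers, rules)}
        out[cluster] = {
            "apiVersion": "v1",
            "kind": "ConfigMap",
            "metadata": {"name": "dynamic-scoring-config", "namespace": "dynamic-scoring"},
            "data": {"scorers.json": json.dumps(chosen, sort_keys=True)},
        }
    return out


CLUSTERS = {"edge-a": {"gpu": "true"}, "edge-b": {}}
SCORERS = {"gpu-power": {"interval_seconds": 300}, "cpu-util": {"interval_seconds": 60}}
RULES = {"gpu-power": {"gpu": "true"}}  # only GPU clusters get the GPU logic

configmaps = render_configmaps(CLUSTERS, SCORERS, RULES)
print(configmaps["edge-b"]["data"]["scorers.json"])  # {"cpu-util": {"interval_seconds": 60}}
```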

3.5. DynamicScoringAgent

The DynamicScoringAgent is automatically deployed to each cluster and executes scoring based on local metrics and the configured evaluation logic.

Based on the ConfigMap distributed to the managed cluster, the agent determines which evaluation logic to run and periodically invokes the corresponding Scoring API.

For each invocation, the agent retrieves the required input metrics from the cluster’s metric collection system (e.g., Prometheus) and passes them to the Scoring API for evaluation.
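Assuming the metric collection system is Prometheus, an agent could fetch a time series through the standard `/api/v1/query_range` HTTP endpoint, which takes `query`, `start`, `end` and `step` parameters and returns a `matrix` result. The sketch below builds the request URL and parses that response shape; the Prometheus address is an assumption, and the HTTP call itself is left out so the example runs offline.

```python
from urllib.parse import urlencode


def query_range_url(base_url: str, query: str, end_s: int, range_s: int, step_s: int) -> str:
    """Build a Prometheus range-query URL covering [end_s - range_s, end_s] at step_s resolution."""
    params = {"query": query, "start": end_s - range_s, "end": end_s, "step": step_s}
    return f"{base_url.rstrip('/')}/api/v1/query_range?{urlencode(params)}"


def parse_matrix(body: dict) -> dict:
    """Turn a Prometheus 'matrix' response into {sorted label tuple: [(t, value), ...]}."""
    if body.get("status") != "success" or body["data"]["resultType"] != "matrix":
        raise ValueError("unexpected Prometheus response")
    return {
        tuple(sorted(item["metric"].items())): [(float(t), float(v)) for t, v in item["values"]]
        for item in body["data"]["result"]
    }


url = query_range_url("http://prometheus.example:9090/", "up", end_s=1000, range_s=600, step_s=60)
print(url)  # http://prometheus.example:9090/api/v1/query_range?query=up&start=400&end=1000&step=60

sample = {"status": "success", "data": {"resultType": "matrix", "result": [
    {"metric": {"instance": "node-1"}, "values": [[400, "1"], [460, "0.5"]]},
]}}
print(parse_matrix(sample))  # {(('instance', 'node-1'),): [(400.0, 1.0), (460.0, 0.5)]}
```

Prometheus returns sample values as strings, which is why the parser converts them with `float` before the series is handed to the evaluation logic.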

The scores generated by the Scoring API can be aggregated to the hub cluster in several ways:

・Exposing them through the agent’s metrics endpoint, storing them in the cluster’s Prometheus, and aggregating them to the hub cluster using external mechanisms such as Multi-Cluster Observability.

・Sending them directly from the agent to the AddOnPlacementScore resource on the hub cluster.

AddOnPlacementScore is a custom resource defined in OCM that holds cluster state evaluation results and can be used for application deployment and other purposes. The aggregated scores are used to achieve globally optimal resource allocation.
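The sketch below shows how raw scores might be mapped into an AddOnPlacementScore object. OCM expects each score value to be an integer in the range -100 to 100; the linear min-max mapping, the resource name `dynamic-scoring`, and the score names are our own assumptions, not the framework’s actual behavior.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def to_placement_range(raw: float, lo: float, hi: float) -> int:
    """Linearly map raw in [lo, hi] onto [-100, 100], clamping values outside the range."""
    if hi == lo:
        return 0
    scaled = -100 + 200 * (raw - lo) / (hi - lo)
    return max(-100, min(100, round(scaled)))


def addon_placement_score(cluster_ns: str, raw_scores: dict, lo: float, hi: float,
                          valid_for_s: int = 600, now: Optional[datetime] = None) -> dict:
    """Build an AddOnPlacementScore in the managed cluster's namespace on the hub."""
    now = now or datetime.now(timezone.utc)
    return {
        "apiVersion": "cluster.open-cluster-management.io/v1alpha1",
        "kind": "AddOnPlacementScore",
        "metadata": {"name": "dynamic-scoring", "namespace": cluster_ns},
        "status": {
            "scores": [
                {"name": name, "value": to_placement_range(v, lo, hi)}
                for name, v in sorted(raw_scores.items())
            ],
            # After this time the scores are treated as stale.
            "validUntil": (now + timedelta(seconds=valid_for_s)).strftime("%Y-%m-%dT%H:%M:%SZ"),
        },
    }


obj = addon_placement_score("edge-a", {"gpu-power": 75}, lo=0, hi=100,
                            now=datetime(2026, 3, 5, tzinfo=timezone.utc))
print(obj["status"])  # {'scores': [{'name': 'gpu-power', 'value': 50}], 'validUntil': '2026-03-05T00:10:00Z'}
```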

The agent’s metrics endpoint records latency and error counts for each Scoring API call, enabling performance monitoring. Because the evaluation logic is modular, the evaluation process can be optimized by scaling or adjusting the deployment of the Scoring APIs as needed.
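Per-call accounting of this kind can be sketched with a small wrapper that times each Scoring API invocation and counts the calls that raise. This is a hypothetical in-process illustration; the real agent exposes these figures through its metrics endpoint, whose metric names are not described here.

```python
import time
from collections import defaultdict


class CallStats:
    """Record latency for every Scoring API call and count the calls that raised."""

    def __init__(self):
        self.latencies = defaultdict(list)  # api name -> [seconds, ...]
        self.errors = defaultdict(int)      # api name -> error count

    def call(self, api_name, fn, *args):
        start = time.perf_counter()
        try:
            return fn(*args)
        except Exception:
            self.errors[api_name] += 1
            raise
        finally:
            # Runs for both successful and failed calls.
            self.latencies[api_name].append(time.perf_counter() - start)

    def summary(self, api_name):
        lat = self.latencies[api_name]
        return {
            "calls": len(lat),
            "errors": self.errors[api_name],
            "avg_latency_s": sum(lat) / len(lat) if lat else 0.0,
        }


stats = CallStats()
stats.call("power-forecast", lambda x: x * 2, 21)
try:
    stats.call("power-forecast", lambda: 1 / 0)
except ZeroDivisionError:
    pass
print(stats.summary("power-forecast")["calls"], stats.summary("power-forecast")["errors"])  # 2 1
```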

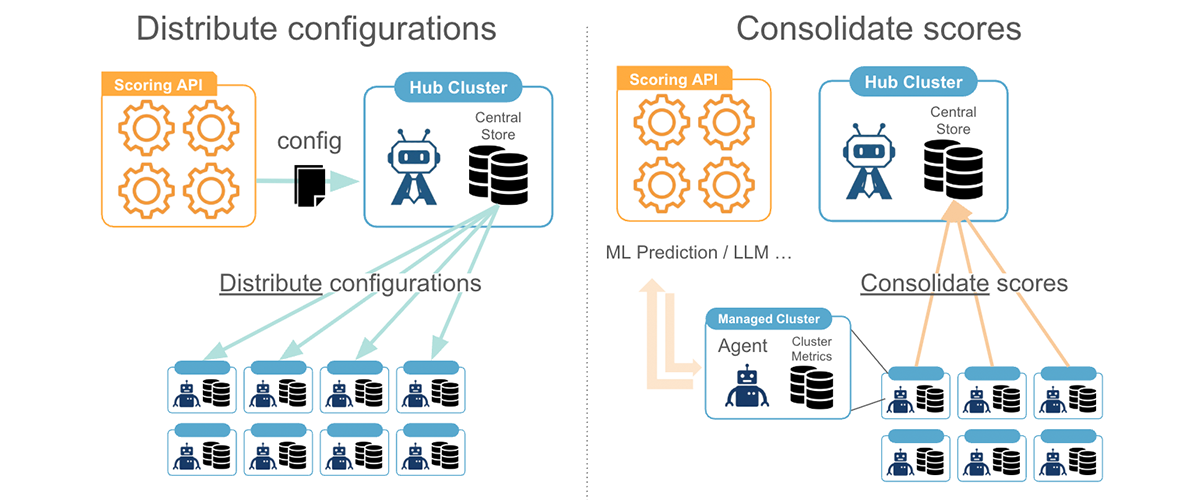

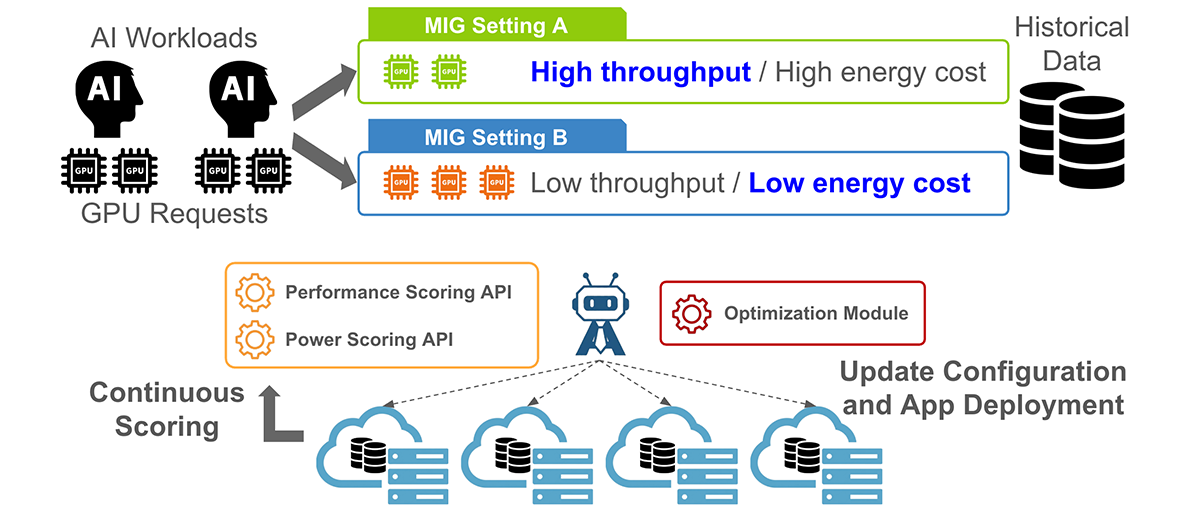

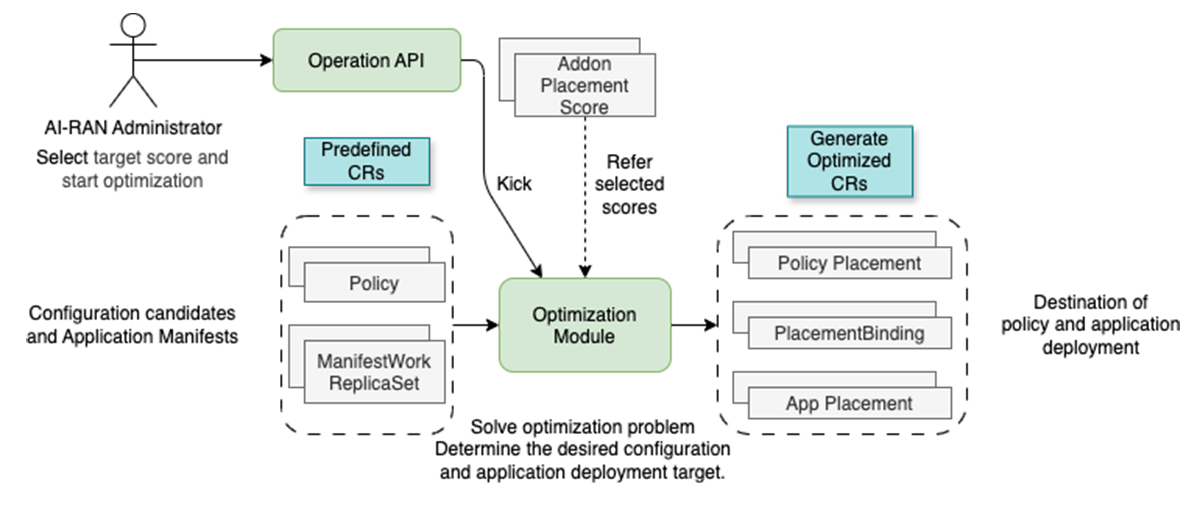

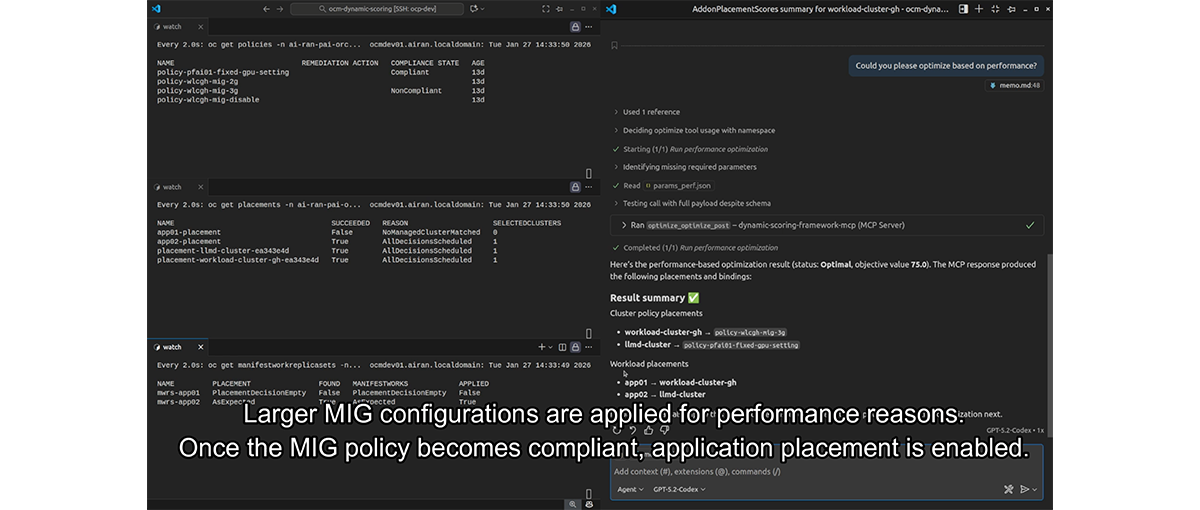

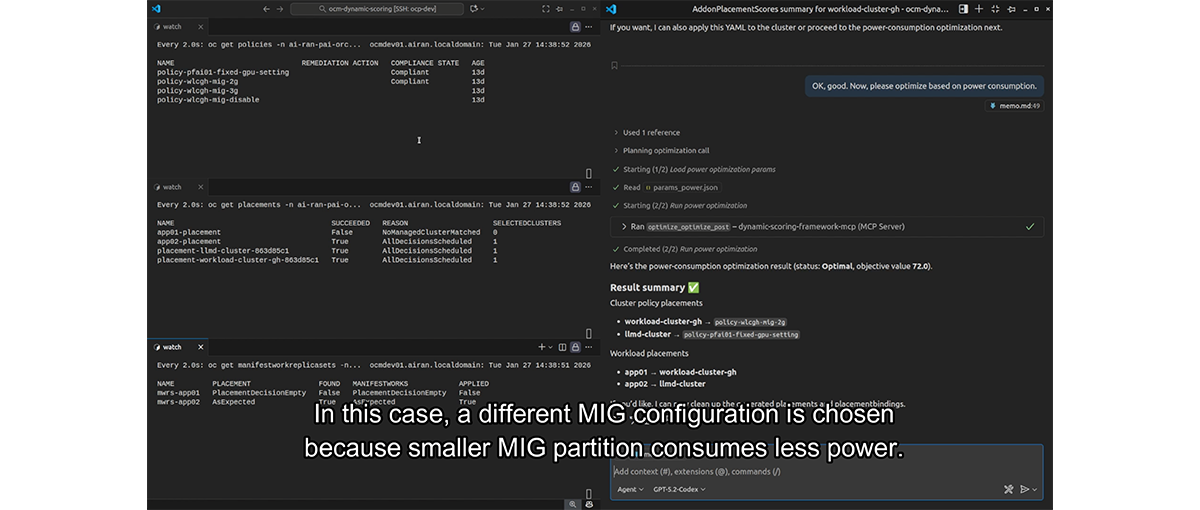

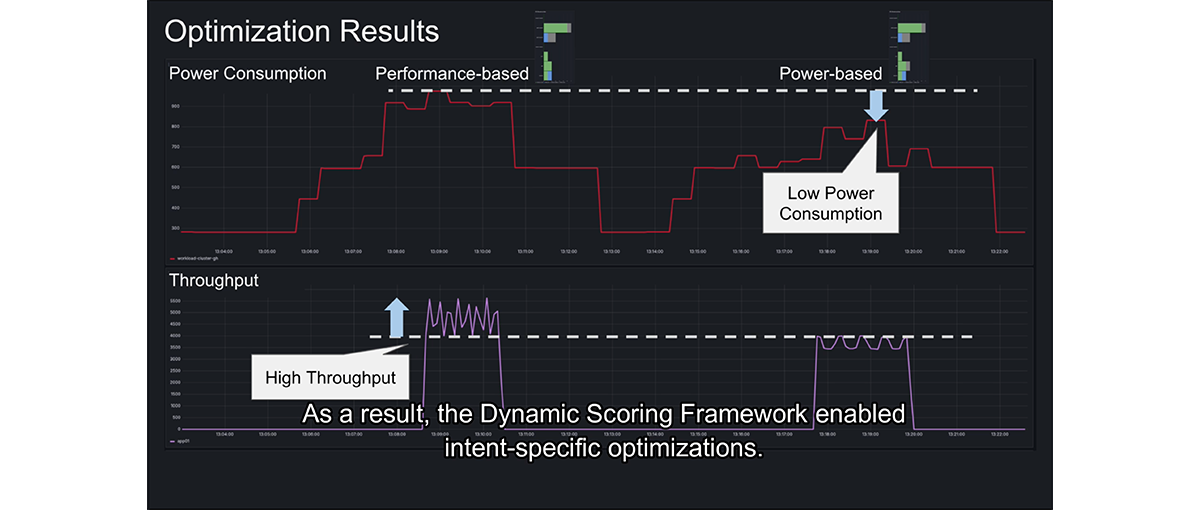

4. Resource Optimization in Multi-Cluster Environments Using the Dynamic Scoring Framework

In this section, we present a configuration example that demonstrates dynamic resource optimization across multiple clusters using the Dynamic Scoring Framework.

In this scenario, application deployment is optimized while adjusting the Multi-Instance GPU (MIG) settings on a managed cluster equipped with multiple GPUs.

Figure 6. Application Deployment Scenarios Based on Selected Evaluation

The workload exposes an LLM inference endpoint and requires two GPU devices to run. It can be deployed on any cluster that provides at least two MIG instances; however, the optimal MIG profile differs in terms of throughput and power consumption. In this scenario, we consider dynamically adjusting the MIG partitioning while deploying the application.
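The decision in this scenario can be reduced to a small selection problem, sketched below in Python: keep only the MIG layouts that provide at least two instances, then pick by throughput or by power. Layout names and all numbers are invented for illustration.

```python
def choose_mig_layout(layouts: list, required_instances: int = 2, objective: str = "throughput"):
    """Return the name of the best feasible layout, or None if no layout has enough instances."""
    feasible = [l for l in layouts if l["instances"] >= required_instances]
    if not feasible:
        return None
    if objective == "throughput":
        return max(feasible, key=lambda l: l["throughput"])["name"]
    return min(feasible, key=lambda l: l["power_w"])["name"]


LAYOUTS = [
    {"name": "layout-a", "instances": 1, "throughput": 200, "power_w": 700},  # too few instances
    {"name": "layout-b", "instances": 2, "throughput": 150, "power_w": 520},
    {"name": "layout-c", "instances": 4, "throughput": 110, "power_w": 380},
]
print(choose_mig_layout(LAYOUTS))                    # layout-b
print(choose_mig_layout(LAYOUTS, objective="power"))  # layout-c
```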

To perform optimization in this kind of situation, we built the overall architecture shown below.

Figure 7. Overall System Architecture Enabling Optimization

With this architecture, optimization can be performed based on the target metrics, enabling application deployment while adjusting MIG settings.

The details of each component are explained below.

4.1. Optimization Module

The custom resources used in this example are as follows:

・Policy: Defines the desired state of cluster resources, such as nodes.

・Placement: Specifies the conditions that target clusters must meet.

・PlacementDecision: Identifies the managed clusters that satisfy the Placement conditions.

・PlacementBinding: Associates a Policy with a Placement, indicating that selected clusters should comply with the policy.

・ManifestWorkReplicaSet: Contains the manifests for deploying the application (e.g., Deployment and Service) along with the Placement specification.

- Once a PlacementDecision is made, the application is deployed to the corresponding clusters.

These resources are provided by OCM.

In this configuration, each cluster is evaluated using the Dynamic Scoring Framework through the AddOnPlacementScore resource. Based on the resulting scores, the following resources are generated via a Web API to achieve overall optimization:

・A Placement and PlacementBinding for the Policy that controls MIG settings.

・A Placement for the application manifest (ManifestWorkReplicaSet).

This approach enables optimal application deployment while dynamically adjusting MIG settings on a per-cluster basis.

Figure 8. Generation of Placement Based on Optimization
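A hypothetical sketch of the Optimization Module’s output step follows: it picks the cluster with the highest AddOnPlacementScore value and emits the three objects listed above as Python dictionaries. The Placement and PlacementBinding field layout follows the OCM APIs; the object names, the namespace, the Policy name, and the assumption that each ManagedCluster carries a `name` label are illustrative.

```python
def best_cluster(scores: dict) -> str:
    """Highest AddOnPlacementScore value wins; ties go to the alphabetically first name."""
    return max(sorted(scores), key=lambda c: scores[c])


def placement(name: str, ns: str, cluster: str) -> dict:
    return {
        "apiVersion": "cluster.open-cluster-management.io/v1beta1",
        "kind": "Placement",
        "metadata": {"name": name, "namespace": ns},
        "spec": {
            "numberOfClusters": 1,
            # Pin the decision to the chosen cluster via its (assumed) "name" label.
            "predicates": [{"requiredClusterSelector": {"labelSelector": {"matchLabels": {"name": cluster}}}}],
        },
    }


def placement_binding(name: str, ns: str, placement_name: str, policy_name: str) -> dict:
    return {
        "apiVersion": "policy.open-cluster-management.io/v1",
        "kind": "PlacementBinding",
        "metadata": {"name": name, "namespace": ns},
        "placementRef": {"apiGroup": "cluster.open-cluster-management.io", "kind": "Placement", "name": placement_name},
        "subjects": [{"apiGroup": "policy.open-cluster-management.io", "kind": "Policy", "name": policy_name}],
    }


def optimization_output(scores: dict, ns: str = "aitras-demo") -> list:
    target = best_cluster(scores)
    return [
        placement("mig-policy-placement", ns, target),  # where the MIG Policy applies
        placement_binding("mig-policy-binding", ns, "mig-policy-placement", "mig-config-policy"),
        placement("llm-app-placement", ns, target),     # referenced by the ManifestWorkReplicaSet
    ]


for obj in optimization_output({"edge-a": 40, "dc-b": 85}):
    print(obj["kind"], obj["metadata"]["name"])
```

On a real hub, the namespace would also need a ManagedClusterSetBinding before these Placements can select any cluster.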

Furthermore, because the API that triggers the optimization is exposed as an MCP (Model Context Protocol)-compatible server, the optimization can also be executed by an AI agent.

This enables a cooperative architecture in which the AI agent and the orchestrator autonomously optimize multi-cluster operations. In this model:

・Specialized logic, such as a Scoring API based on ML predictions, works together with the Optimization Module to ensure optimality.

・The AI agent interprets key metrics derived from the overall score according to the operator’s intent and triggers the optimization process.

Figure 9. Screenshot of the Optimization Demo According to Different Situations

4.2. Cluster Configuration Management Using the OCM Policy Framework

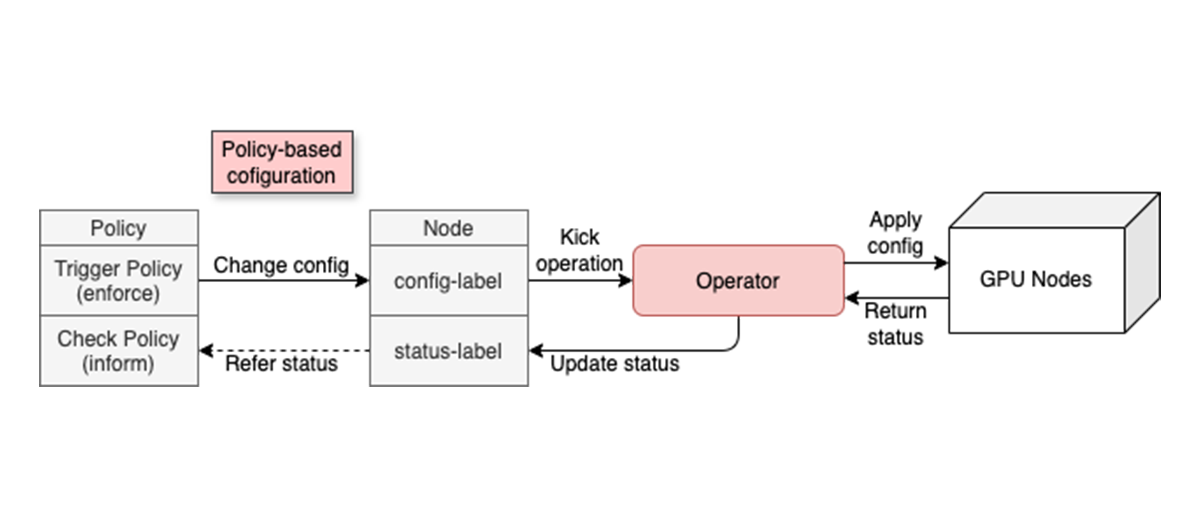

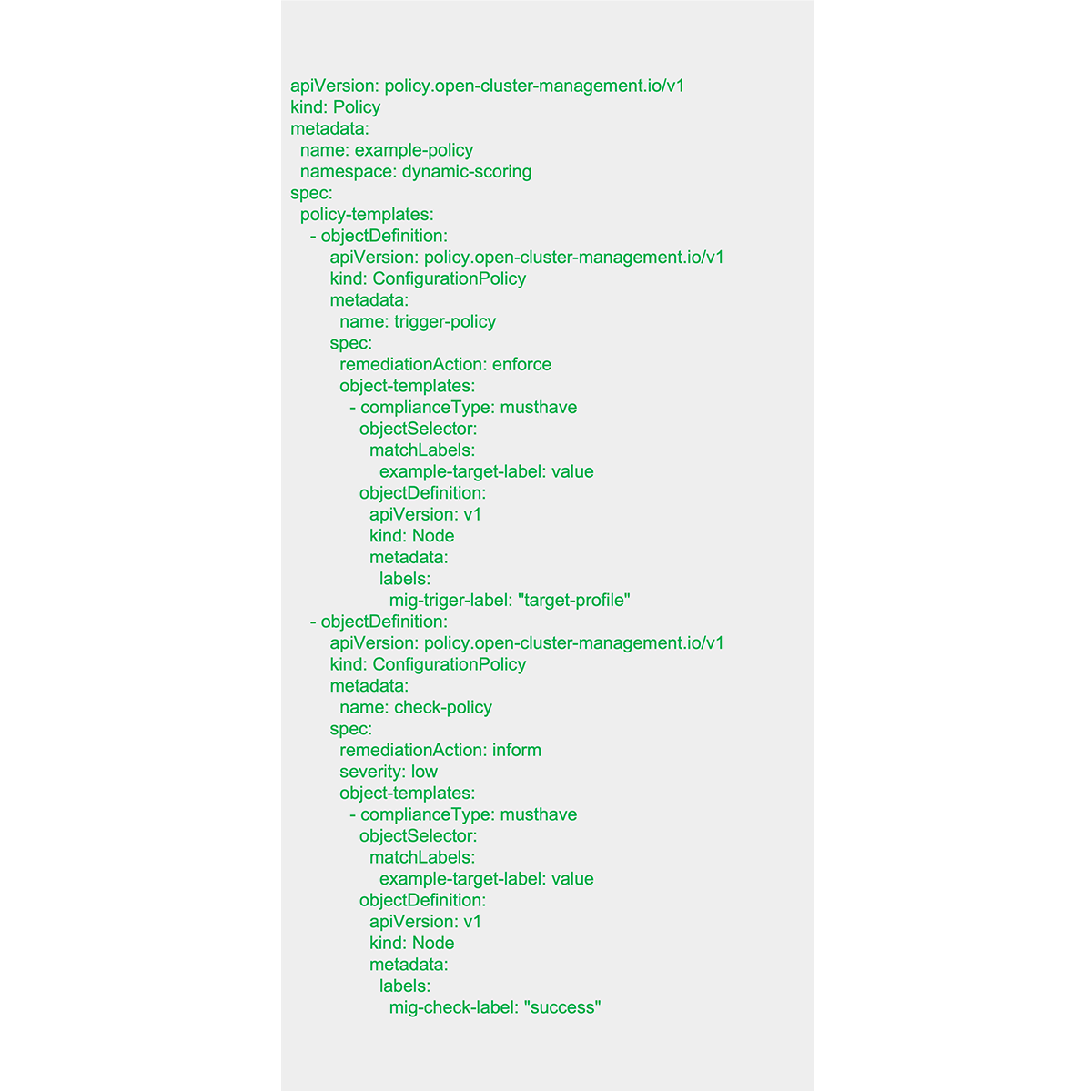

In this setup, the MIG settings of each cluster are changed dynamically using the OCM Policy Framework.

The Policy Framework is an OCM mechanism that lets you define the desired state of Kubernetes resources as a Policy, bind that Policy to clusters through a PlacementBinding, and then either enforce the desired state on the resources in each cluster or report (inform) any deviation from it.

In this example, we created a Policy that becomes compliant once the configuration change has completed correctly. The Policy enforces the node label that requests a configuration change from the GPU Operator, and reports (informs) the state in which the change is still in progress as a violation.

Binding this Policy to the target cluster with a PlacementBinding is what carries out the MIG configuration change.

Figure 10. Node Configuration Changes Using Policy

As a concrete example, applying a Policy like the one shown below triggers a MIG configuration change on cluster nodes labeled with example-target-label=value and becomes compliant once the configuration change is completed.

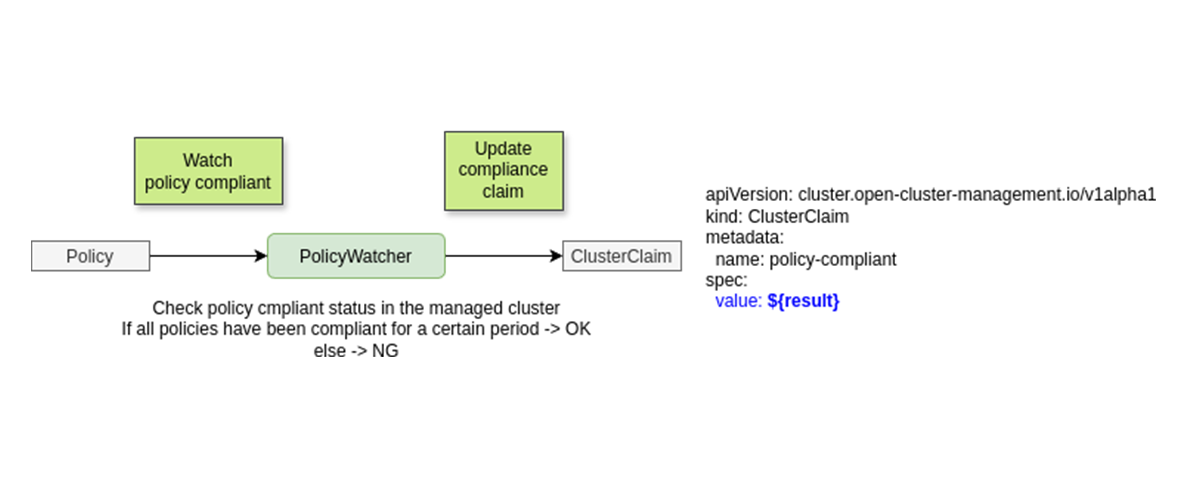
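The compliance rule described above can be expressed as a check on node labels. The sketch assumes the label convention of the NVIDIA GPU Operator’s MIG Manager (`nvidia.com/mig.config` requests a layout and `nvidia.com/mig.config.state` reports progress, such as `pending` or `success`); the target label matches the example in the text, and the layout name is hypothetical. It models only the decision logic, not the ConfigurationPolicy syntax.

```python
TARGET_LABEL = ("example-target-label", "value")  # from the example Policy in the text


def node_status(labels: dict, desired_layout: str) -> str:
    key, val = TARGET_LABEL
    if labels.get(key) != val:
        return "not-targeted"
    if labels.get("nvidia.com/mig.config") != desired_layout:
        return "enforce-label"  # enforce: set the label that requests the layout
    if labels.get("nvidia.com/mig.config.state") == "success":
        return "compliant"
    return "violation"          # inform: change still pending, rebooting, or failed


def policy_compliant(nodes: dict, desired_layout: str) -> bool:
    """Compliant only if at least one node is targeted and every targeted node is done."""
    statuses = [node_status(labels, desired_layout) for labels in nodes.values()]
    targeted = [s for s in statuses if s != "not-targeted"]
    return bool(targeted) and all(s == "compliant" for s in targeted)


NODES = {
    "gpu-node-1": {"example-target-label": "value",
                   "nvidia.com/mig.config": "two-instance-layout",
                   "nvidia.com/mig.config.state": "pending"},
    "cpu-node-1": {},
}
print(policy_compliant(NODES, "two-instance-layout"))  # False while the change is pending
```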

4.3. Updating ClusterClaims with PolicyWatcher

The PolicyWatcher monitors Policy resources within each cluster, observes their state, and updates the corresponding ClusterClaim.

Although updating the ClusterClaim based on Policy compliance can be achieved using the Policy Framework alone, in this example we require the Policy to remain in a compliant and stable state for a certain period before deploying the application. To achieve this, we deploy a lightweight Pod that verifies the stability condition before updating the ClusterClaim.

Figure 11. Operational Overview of the PolicyWatcher
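The stability condition can be sketched as a small state machine that only reports compliance once the Policy has stayed compliant for a full window. The window length, the claim name, and the `"true"`/`"false"` values are assumptions; the ClusterClaim object layout follows the OCM `cluster.open-cluster-management.io/v1alpha1` API.

```python
from typing import Optional


class StabilityGate:
    """Report compliance only after it has held continuously for window_s seconds."""

    def __init__(self, window_s: float):
        self.window_s = window_s
        self.compliant_since: Optional[float] = None

    def observe(self, compliant: bool, now_s: float) -> str:
        """Feed one Policy observation; return the value to write into the ClusterClaim."""
        if not compliant:
            self.compliant_since = None  # any violation restarts the window
            return "false"
        if self.compliant_since is None:
            self.compliant_since = now_s
        return "true" if now_s - self.compliant_since >= self.window_s else "false"


def cluster_claim(name: str, value: str) -> dict:
    return {
        "apiVersion": "cluster.open-cluster-management.io/v1alpha1",
        "kind": "ClusterClaim",
        "metadata": {"name": name},
        "spec": {"value": value},
    }


gate = StabilityGate(window_s=120)
for t, ok in [(0, True), (60, True), (90, False), (100, True), (220, True)]:
    claim = cluster_claim("mig-policy-compliant", gate.observe(ok, t))
print(claim["spec"]["value"])  # true
```

The brief violation at t=90 resets the window, so the claim flips to `"true"` only at t=220, 120 seconds after compliance resumed.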

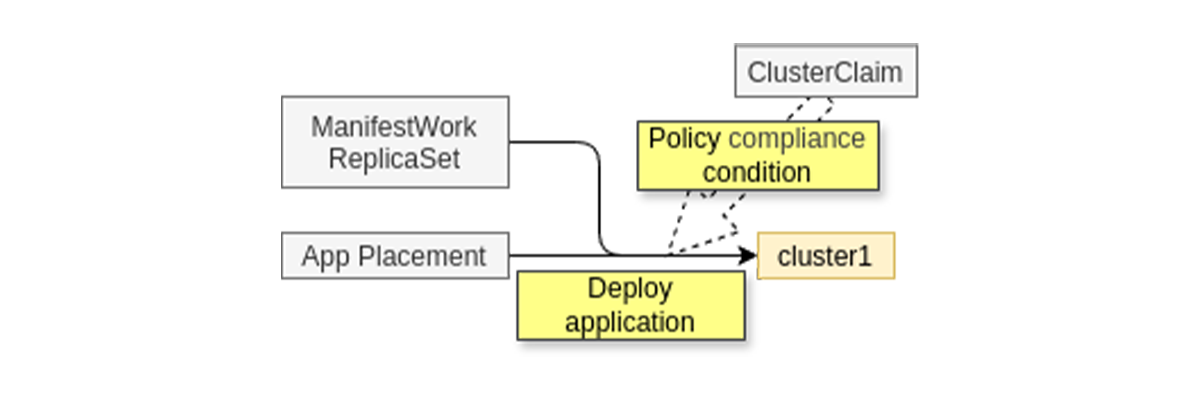

The Placement for workload deployment is generated so that it selects clusters whose ClusterClaim indicates Policy compliance. As a result, applications are deployed only to clusters where the Policies have been applied.

Figure 12. Application Deployment to the Cluster After Policy Application
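On the selection side, an OCM Placement can filter clusters on ClusterClaims through `claimSelector`. The sketch below builds such a Placement and includes a tiny offline check of its `In` expression; the claim name `mig-policy-compliant` is hypothetical, and the checker deliberately handles only the `In` operator used here.

```python
def app_placement(name: str, ns: str, claim_name: str = "mig-policy-compliant") -> dict:
    return {
        "apiVersion": "cluster.open-cluster-management.io/v1beta1",
        "kind": "Placement",
        "metadata": {"name": name, "namespace": ns},
        "spec": {
            "predicates": [{
                "requiredClusterSelector": {
                    "claimSelector": {
                        "matchExpressions": [{"key": claim_name, "operator": "In", "values": ["true"]}]
                    }
                }
            }]
        },
    }


def selects(placement_obj: dict, cluster_claims: dict) -> bool:
    """Offline check of the claim predicate against a cluster's claims ({name: value})."""
    for pred in placement_obj["spec"]["predicates"]:
        for expr in pred["requiredClusterSelector"]["claimSelector"]["matchExpressions"]:
            if expr["operator"] == "In" and cluster_claims.get(expr["key"]) not in expr["values"]:
                return False
    return True


p = app_placement("llm-app-placement", "aitras-demo")
print(selects(p, {"mig-policy-compliant": "true"}), selects(p, {}))  # True False
```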

4.4. Resource Optimization of the Scoring API Itself

Another advanced use case is optimizing the resource consumption of the Scoring API itself. For example, when the Scoring API is deployed in the multi-cluster environment, it is desirable for the evaluation logic to run only on spare resources, as the infrastructure should be prioritized for workload execution.

The Dynamic Scoring Framework is effective in such scenarios.

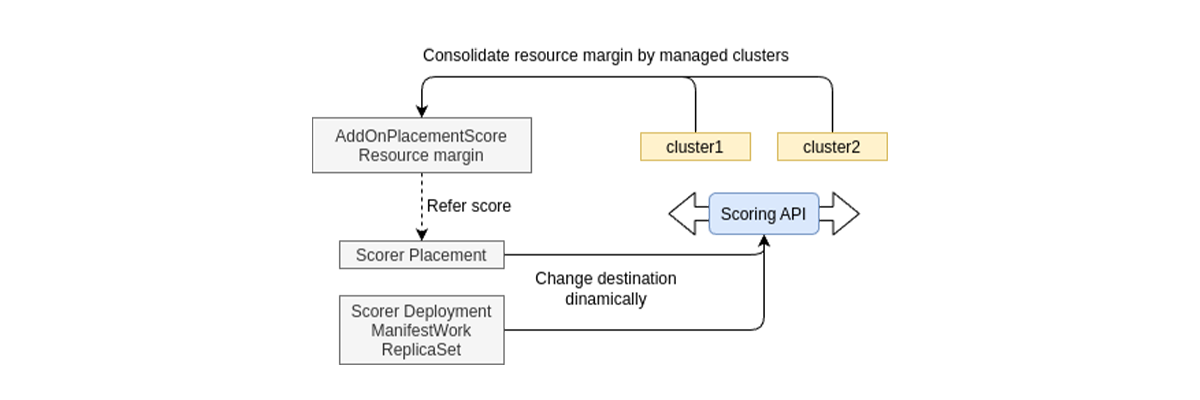

For instance, if a Scoring API that calculates a score from the amount of spare resources available in each cluster is implemented and registered with the framework, the AddOnPlacementScore is updated automatically.

In this state, the following resources are created:

・A ManifestWorkReplicaSet that deploys the Scoring API within the cluster.

・A Placement that references the available-resource score (AddOnPlacementScore).

With these in place, the Dynamic Scoring Framework can optimize the resource allocation of the Scoring API itself through the following process:

・The evaluation logic registered in the framework calculates a score based on available resources and automatically updates the AddOnPlacementScore.

・A Placement that references the AddOnPlacementScore selects target clusters (via PlacementDecision) according to available capacity.

・The Scoring API is deployed through a ManifestWorkReplicaSet so that the evaluation logic runs on clusters with sufficient spare resources.

Figure 13. Dynamic Reallocation of the Scoring API Using AddOnPlacementScore
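The two pieces of this loop are sketched below: a spare-capacity score mapped into the AddOnPlacementScore range of -100 to 100, and a Placement that prioritizes clusters by that score through an `AddOn` score coordinate. The spare-capacity formula, the `dynamic-scoring` resource name, and the `spare-resources` score name are assumptions.

```python
def spare_score(allocatable: float, requested: float) -> int:
    """Map the spare fraction (0..1) of a resource onto the AddOnPlacementScore range -100..100."""
    if allocatable <= 0:
        return -100
    spare = max(0.0, (allocatable - requested) / allocatable)
    return round(-100 + 200 * spare)


def scoring_api_placement(ns: str, clusters: int = 1) -> dict:
    return {
        "apiVersion": "cluster.open-cluster-management.io/v1beta1",
        "kind": "Placement",
        "metadata": {"name": "scoring-api-placement", "namespace": ns},
        "spec": {
            "numberOfClusters": clusters,
            "prioritizerPolicy": {
                "mode": "Exact",  # use only the prioritizers listed below
                "configurations": [{
                    "scoreCoordinate": {
                        "type": "AddOn",
                        "addOn": {"resourceName": "dynamic-scoring", "scoreName": "spare-resources"},
                    },
                    "weight": 1,
                }],
            },
        },
    }


print(spare_score(allocatable=8, requested=2))  # 50
```

A ManifestWorkReplicaSet that references this Placement would then move the Scoring API to whichever clusters currently report the most spare capacity.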

5. Dynamic Scoring Framework Guides the Optimal Placement of AI and RAN Workloads

This article introduced the AITRAS Orchestrator, which addresses the need for resource optimization in multi-cluster environments in the AI-RAN era, and described its core mechanism, the Dynamic Scoring Framework.

The Dynamic Scoring Framework enables optimal placement and execution of AI and RAN workloads by flexibly incorporating cluster resource status and diverse evaluation logic. Its modular evaluation design, distributed scoring performed by individual clusters, and centralized aggregation for global optimization together enable advanced resource management aligned with the intentions of operators and AI agents.

This content is also covered in a technical blog post by Red Hat; please refer to it as well.

References

1. SoftBank Corp. and Ericsson achieve interworking between AITRAS Orchestrator and Ericsson Intelligent Automation Platform

2. Integration of External AI Workloads in AI-RAN Implementation of Dynamic Resource Control with the AITRAS Orchestrator

3. AI-RAN Alliance Platform and Infrastructure Orchestrator White Paper

4. Dynamic Scoring Framework (GitHub)

5. OCM Policy framework